Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: When Surveillance Meets Reality

How highly accurate models still produce noisy, unreliable surveillance systems—and why understanding begins after detection.

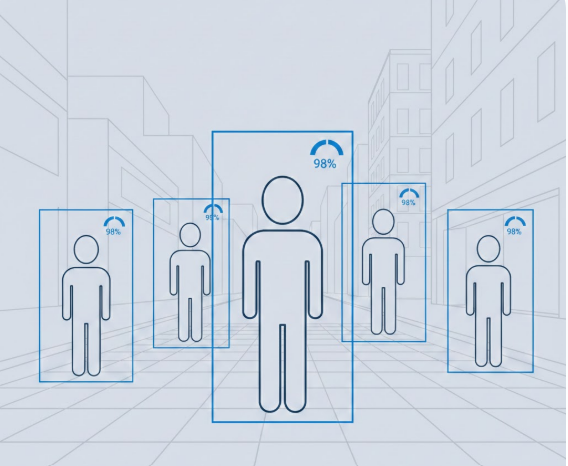

One of the first metrics people look at in a surveillance system is detection accuracy.

“How accurate is the model?”

“Does it detect people reliably?”

“What percentage does it achieve?”

At first glance, this makes sense.

If the system sees correctly, intelligence should follow.

In practice, this assumption fails more often than it succeeds.

Object detection models answer a very specific question:

“What objects are present in this frame?”

Modern models do this extremely well.

They can identify people, vehicles, bags, animals—often with impressive confidence.

But accuracy only measures visibility, not relevance.

A system can be perfectly accurate and still be completely unhelpful.

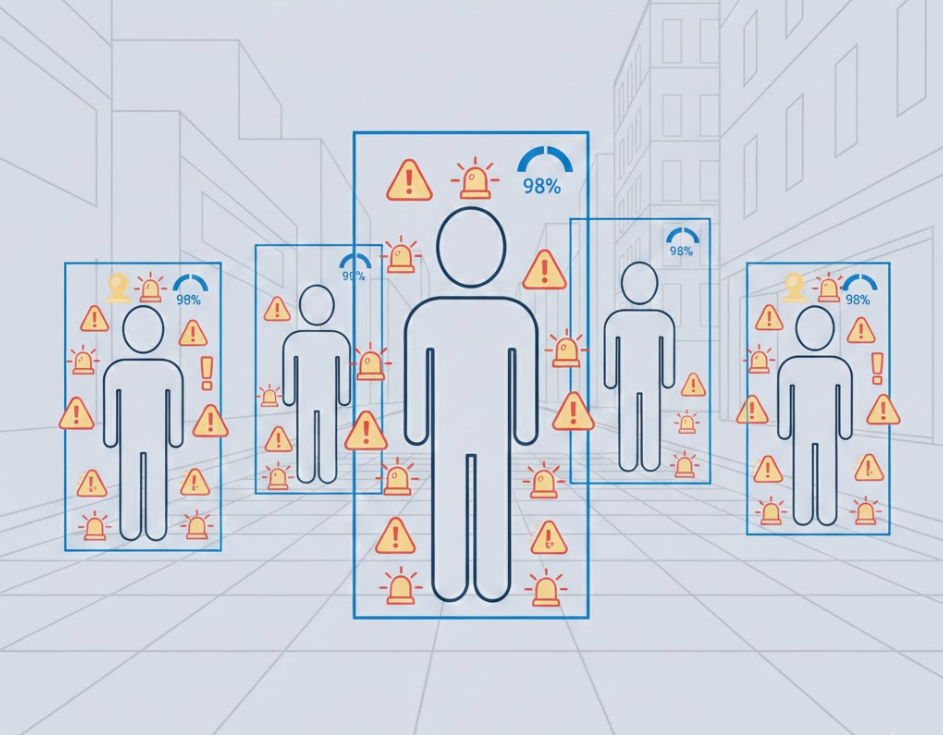

In real surveillance deployments, the problem is rarely missed detections.

The real problem is unnecessary reactions.

A person walking past a camera may be detected correctly.

So may a person waiting.

So may a person turning around, bending, or pausing briefly.

All detections are accurate.

But not all detections matter.

This is where intelligence begins to diverge from accuracy.

This distinction is critical—and often misunderstood.

Most real-world failures come from the second category.

A system can have:

…and still generate alerts that operators ignore.

Why?

Because correct detections are being interpreted without context.

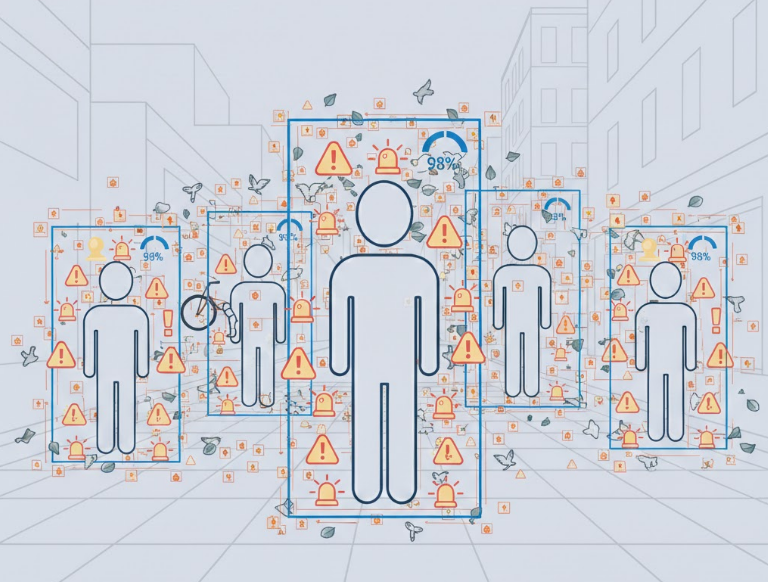

A common response is to tune confidence thresholds.

“If the confidence is low, ignore it.”

“If it’s high, trigger an alert.”

This improves stability slightly—but does not fix the core issue.

Confidence measures how sure the model is that something exists.

It does not measure whether that thing is important.
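
The limit of threshold tuning can be seen in a small sketch. The `Detection` class, its `relevant` flag, and the sample values below are hypothetical and chosen only for illustration; a real detector does not output relevance, which is the point.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float  # how sure the model is that the object exists
    relevant: bool     # hypothetical ground truth: would an operator care?


def alerts_by_threshold(detections, threshold):
    """Keep only detections whose confidence meets the threshold."""
    return [d for d in detections if d.confidence >= threshold]


# Illustrative values, not measurements from any real system.
detections = [
    Detection("person", 0.97, relevant=False),  # walking past the camera
    Detection("person", 0.95, relevant=False),  # pausing briefly
    Detection("person", 0.96, relevant=True),   # an event worth an alert
    Detection("person", 0.41, relevant=False),  # low-confidence detection
]

alerts = alerts_by_threshold(detections, threshold=0.9)
irrelevant_alerts = [d for d in alerts if not d.relevant]

print(f"alerts raised: {len(alerts)}, irrelevant: {len(irrelevant_alerts)}")
```

In this toy data the threshold removes the one low-confidence detection, yet two of the three remaining alerts are still irrelevant. Raising the threshold further cannot separate them, because the relevant and irrelevant detections have nearly identical confidence.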

Importance comes from:

None of these are encoded in detection confidence.

Ironically, better models often make systems noisier.

As detection improves:

Without strong filtering and reasoning, this creates:

The system sees more—but understands less.

Detection is the first step, not the decision.

Real intelligence appears only when detections are combined with:

Detection answers what exists.

Intelligence answers what matters.
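
As one illustration of a decision layer sitting on top of detection, the sketch below uses dwell time as the added context. This is an assumption made for the example, not a rule prescribed by this article: the track IDs, the timestamps, and the 30-second limit are all hypothetical, and the code presumes an upstream tracker that assigns a stable ID to each person.

```python
def dwell_alerts(observations, min_dwell_s):
    """Return track IDs that stayed in view for at least min_dwell_s seconds.

    observations: iterable of (track_id, timestamp_s) pairs, one per
    correct detection. Every pair is an accurate detection; only some
    tracks last long enough to be worth an alert.
    """
    first_seen = {}
    last_seen = {}
    for track_id, t in observations:
        first_seen[track_id] = min(first_seen.get(track_id, t), t)
        last_seen[track_id] = max(last_seen.get(track_id, t), t)
    return sorted(
        track_id
        for track_id in first_seen
        if last_seen[track_id] - first_seen[track_id] >= min_dwell_s
    )


# Hypothetical observations: "a" walks past, "b" waits, "c" is seen once.
observations = [
    ("a", 0.0), ("a", 2.0),
    ("b", 0.0), ("b", 20.0), ("b", 45.0),
    ("c", 10.0),
]

flagged = dwell_alerts(observations, min_dwell_s=30.0)
print(flagged)
```

All six observations are correct detections, but only track "b" exceeds the dwell limit. The detector is unchanged; the difference comes entirely from the context applied afterward.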

Instead of asking:

“How accurate is the detector?”

A better question is:

“How many detections does the system correctly ignore?”

Ignoring irrelevant information is not a weakness.

It is a sign of maturity.
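
The question above can be turned into a number. The sketch below is one possible way to measure it, assuming each detection has been labeled after the fact as relevant or not; the metric names `correct_ignore_rate` and `alert_precision` and the sample counts are hypothetical.

```python
def ignore_metrics(events):
    """Summarize how well a system ignores irrelevant detections.

    events: list of (alerted, relevant) boolean pairs, one per detection.
    """
    irrelevant_total = sum(1 for _, relevant in events if not relevant)
    correctly_ignored = sum(
        1 for alerted, relevant in events if not alerted and not relevant
    )
    alerts = sum(1 for alerted, _ in events if alerted)
    useful_alerts = sum(
        1 for alerted, relevant in events if alerted and relevant
    )
    return {
        # Share of irrelevant detections that raised no alert.
        "correct_ignore_rate": (
            correctly_ignored / irrelevant_total if irrelevant_total else None
        ),
        # Share of raised alerts that were worth an operator's attention.
        "alert_precision": useful_alerts / alerts if alerts else None,
    }


# Illustrative log: 8 irrelevant detections ignored, 1 irrelevant alert,
# 1 relevant alert.
events = [(False, False)] * 8 + [(True, False)] + [(True, True)]
metrics = ignore_metrics(events)
print(metrics)
```

Both numbers describe the system's decisions rather than the detector's accuracy, so they can move independently of it.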

High detection accuracy is necessary—but never sufficient.

Surveillance systems fail not because they cannot see,

but because they react without understanding.

Intelligence does not come from seeing more.

It comes from deciding less—and deciding better.

Next in Series: Why Motion Is the Most Misleading Signal in Video