Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: When Surveillance Meets Reality

Previous: https://varsity.thopps.com/why-motion-is-the-most-misleading-signal-in-video

How identity, time, and context turn detections into behaviour

At first, the system was proud of itself.

It saw a frame.

It drew a box.

It named the object.

It attached a confidence score.

Success.

Another frame arrived.

Another box.

Another label.

Another success.

From the system’s point of view, it was doing its job perfectly.

From a human’s point of view, nothing meaningful was happening.

Most vision systems confuse seeing with understanding.

A detection pipeline answers a very specific, very narrow question:

What exists in this frame, right now?

And then it moves on.

No memory.

No continuity.

No curiosity.

A detection lives for one frame and then disappears.

It doesn’t know if it existed before.

It doesn’t care if it will exist after.

Technically speaking, a detection has a bounding box, a class label, and a confidence score.

It is a moment — not a story.
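As a rough illustration, a single detection can be modelled as an immutable record. The field names below are assumptions chosen for this sketch (the box, label, and score come from the opening of this article; `frame_index` is added only to show where the record lives). Note what is absent: no identity, no duration, no link to any other frame.

```python
from dataclasses import dataclass, fields
from typing import Tuple


@dataclass(frozen=True)
class Detection:
    """One detection in one frame. Field names are hypothetical."""
    frame_index: int                          # the only frame this record knows about
    box: Tuple[float, float, float, float]    # (x1, y1, x2, y2) in pixels
    label: str                                # class name, e.g. "person"
    confidence: float                         # detector score in [0, 1]


d = Detection(frame_index=0, box=(10.0, 20.0, 50.0, 120.0),
              label="person", confidence=0.91)

# The record carries no memory: nothing here says whether this person
# was seen before or will be seen again.
field_names = [f.name for f in fields(d)]
```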

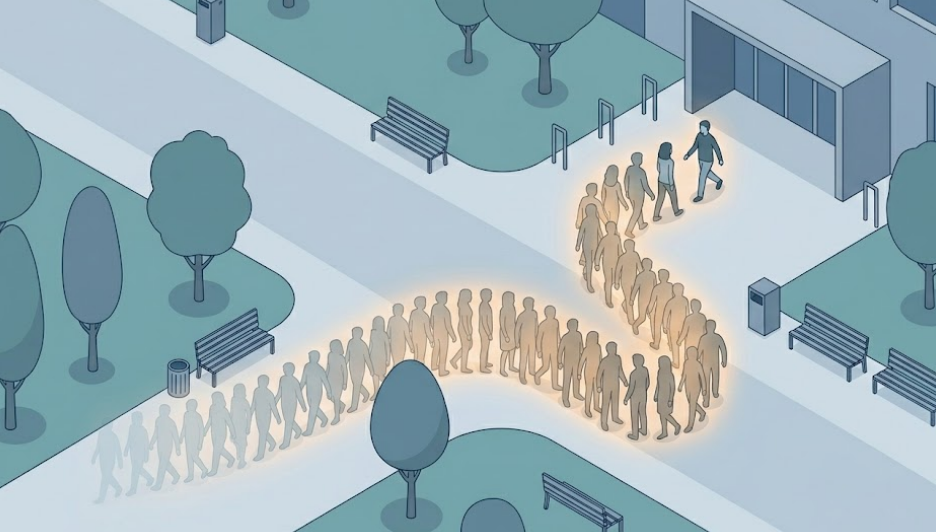

A person loitering does not exist in any single image.

There is no frame where “loitering” suddenly becomes visible.

What exists instead are fragments: individual detections, each confined to its own frame.

Only when these detections are linked across time does something new emerge.

Behaviour is not a property of frames.

It is a property of sequences.

Duration.

Repetition.

Direction.

Interaction.

These are temporal signals — and detection, by design, has no concept of time.

This is why many surveillance systems feel overwhelming.

They are constantly shouting:

person detected

person detected

person detected

Nothing has changed — yet alerts keep firing.

Because without time, everything looks urgent.

Without memory, everything looks new.

From a system perspective, every detection arrives with no history attached.

So the system reacts instead of observing.

Technically, it’s responsive.

Practically, it’s blind.
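A toy comparison may make the difference concrete. The sketch below assumes a simplified input, one boolean per frame saying whether a person was detected, and both function names are invented for illustration. The stateless version shouts on every frame; the version with one bit of memory speaks only when something changes.

```python
def stateless_alerts(frames):
    """Fire on every frame that contains a person. No memory."""
    return [f"frame {i}: person detected"
            for i, present in enumerate(frames) if present]


def stateful_alerts(frames):
    """Fire only when presence changes from absent to present."""
    alerts = []
    previously_present = False  # the single piece of remembered state
    for i, present in enumerate(frames):
        if present and not previously_present:
            alerts.append(f"frame {i}: person appeared")
        previously_present = present
    return alerts


frames = [True, True, True, False, True]
noisy = stateless_alerts(frames)   # one alert per positive frame
quiet = stateful_alerts(frames)    # one alert per appearance
```

This is deliberately minimal: it has memory but no identity, so it cannot tell whether the person who reappears is the same one.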

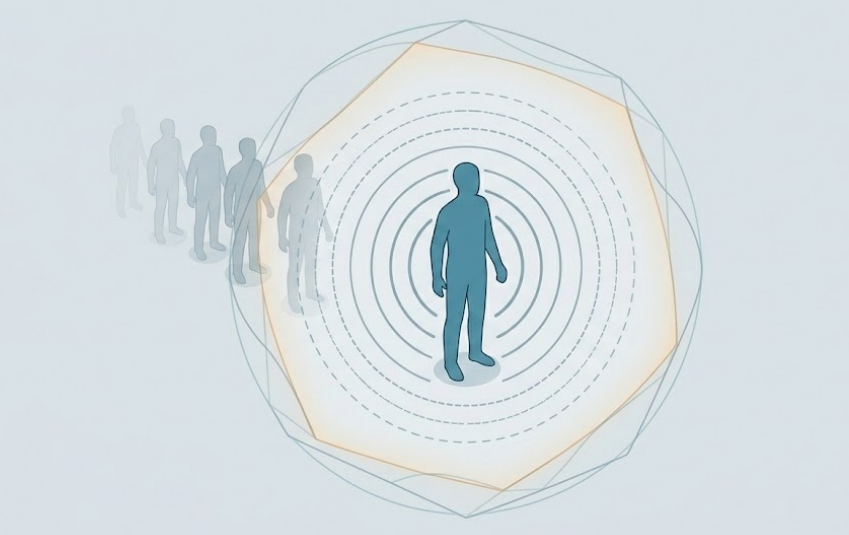

Detection becomes behaviour only when three things align.

Identity

“This is the same entity I observed earlier.”

This requires tracking, re-identification, or at least temporal association.
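The simplest form of temporal association can be sketched as greedy matching on box overlap (intersection over union). This is one possible technique, not necessarily what any particular system uses; the function names, the `min_iou` threshold of 0.3, and the choice to drop unmatched tracks immediately are all assumptions of this sketch.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def associate(tracks, boxes, next_id, min_iou=0.3):
    """Greedily match new boxes to the previous frame's tracks.

    tracks: dict of track_id -> last known box.
    Returns (updated_tracks, next_id). Tracks with no match are
    dropped at once; a real tracker would usually keep them for a while.
    """
    unmatched = dict(tracks)
    updated = {}
    for box in boxes:
        best_id, best_score = None, min_iou
        for track_id, previous_box in unmatched.items():
            score = iou(previous_box, box)
            if score >= best_score:
                best_id, best_score = track_id, score
        if best_id is None:          # nothing overlaps enough: new entity
            best_id = next_id
            next_id += 1
        else:                        # same entity as before
            del unmatched[best_id]
        updated[best_id] = box
    return updated, next_id


tracks, next_id = associate({}, [(0, 0, 10, 10)], next_id=0)
tracks, next_id = associate(tracks, [(1, 0, 11, 10)], next_id)   # slight shift
```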

Time

“How long has this persisted? How often has it repeated?”

This introduces state: buffers, windows, and decay functions.
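One way to picture a decay function is a presence score that grows with each sighting and halves after a fixed interval without one. The class name and the five-second half-life are assumptions made for this sketch.

```python
class DecayingPresence:
    """Per-entity score that rises on sightings and decays over time."""

    def __init__(self, half_life_s=5.0):
        self.half_life_s = half_life_s
        self.score = 0.0
        self.last_t = None

    def update(self, t, seen):
        """Advance to time t (seconds) and record whether the entity was seen."""
        if self.last_t is not None:
            elapsed = t - self.last_t
            # Exponential decay: the score halves every half_life_s seconds.
            self.score *= 0.5 ** (elapsed / self.half_life_s)
        self.last_t = t
        if seen:
            self.score += 1.0
        return self.score


presence = DecayingPresence(half_life_s=5.0)
s0 = presence.update(0.0, seen=True)     # first sighting
s1 = presence.update(5.0, seen=False)    # one half-life with no sighting
s2 = presence.update(10.0, seen=True)    # decays again, then a new sighting
```

A score like this answers "how persistent has this been lately?" without storing every past frame.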

Context

“Where is this happening — and does it matter here?”

Zones, rules, expectations, intent.
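A zone can be as simple as a polygon in image coordinates, with a rule that tests whether an entity's foot point falls inside it. The sketch below uses the standard ray-casting test; the helper names and the choice of the bottom-centre of the box as the foot point are assumptions of this example.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: is (x, y) inside the polygon given as a vertex list?"""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def foot_point(box):
    """Bottom-centre of an (x1, y1, x2, y2) box, a rough ground position."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2.0, y2)


restricted_zone = [(0, 0), (100, 0), (100, 100), (0, 100)]  # hypothetical zone
in_zone = point_in_polygon(foot_point((40, 10, 60, 90)), restricted_zone)
```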

Only when these converge can a system infer behaviours such as loitering.

None of these are detectable signals.

They are patterns over time.
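Taking loitering as the example, the convergence might look like the rule below: a track ID supplies identity, a dwell timer supplies time, and an in-zone flag supplies context. The class name, the 60-second threshold, and the event dictionary are hypothetical; the tracker and zone test that would feed it are assumed to exist upstream.

```python
class LoiteringRule:
    """Emit one event when the same track stays in a zone long enough."""

    def __init__(self, min_dwell_s=60.0):
        self.min_dwell_s = min_dwell_s
        self.entered_at = {}    # track_id -> time it was first seen in the zone
        self.reported = set()   # track_ids already reported for this stay

    def update(self, t, track_id, in_zone):
        """Feed one observation; return an event dict or None."""
        if not in_zone:
            # Leaving the zone resets the clock for this entity.
            self.entered_at.pop(track_id, None)
            self.reported.discard(track_id)
            return None
        start = self.entered_at.setdefault(track_id, t)
        if t - start >= self.min_dwell_s and track_id not in self.reported:
            self.reported.add(track_id)
            return {"event": "loitering", "track_id": track_id,
                    "since": start, "at": t}
        return None


rule = LoiteringRule(min_dwell_s=60.0)
results = [rule.update(t, track_id=7, in_zone=True) for t in (0.0, 30.0, 60.0, 61.0)]
```

No single call to `update` contains the behaviour. It exists only in the state carried between calls.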

Raising confidence thresholds feels like improvement.

It isn’t.

A single high-confidence detection means very little.

A low-confidence detection repeating for two minutes often means everything.

Behaviour is not about precision.

It is about consistency.

This is why behaviour systems care less about how accurate a frame is

and more about how stable a pattern becomes.
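The idea can be sketched as a fixed-size window that asks how often an entity was seen, not how confidently. The class name, the deliberately low confidence floor of 0.3, and the 80% hit ratio are assumptions; with one sample per second, a window of 120 samples would correspond to the two minutes mentioned above.

```python
from collections import deque


class ConsistencyWindow:
    """Judge a pattern by how steadily it recurs, not by any single score."""

    def __init__(self, size=120, min_hit_ratio=0.8, min_confidence=0.3):
        self.hits = deque(maxlen=size)   # oldest samples fall off automatically
        self.min_hit_ratio = min_hit_ratio
        self.min_confidence = min_confidence

    def update(self, confidence):
        """confidence is the detector score, or None if nothing was detected.

        Returns True once the window is full and enough samples were hits.
        """
        hit = confidence is not None and confidence >= self.min_confidence
        self.hits.append(hit)
        window_full = len(self.hits) == self.hits.maxlen
        return window_full and sum(self.hits) / len(self.hits) >= self.min_hit_ratio


# One very confident frame is not a pattern.
single = ConsistencyWindow(size=5).update(0.99)

# Five modest observations, one of them missed, are.
window = ConsistencyWindow(size=5)
steady = [window.update(c) for c in (0.40, 0.35, None, 0.40, 0.45)]
```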

An event is not detected.

It is inferred.

Only after identity, time, and context have aligned does an event earn meaning.

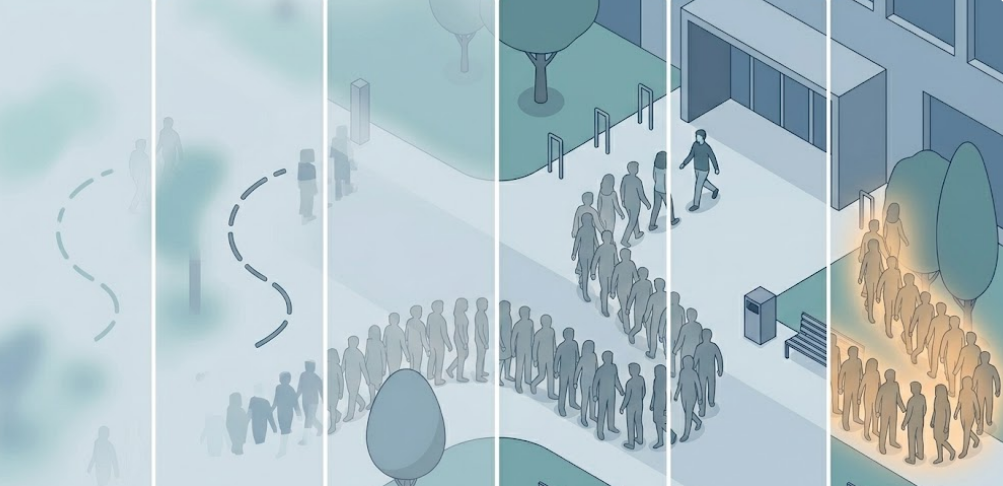

Well-designed systems delay decisions not because they are slow —

but because they are careful.

They wait for the story to finish telling itself.

Detection is the alphabet of vision systems.

Behaviour is the language.

You can detect everything

and still understand nothing.

Understanding begins when systems stop asking:

“What is in this frame?”

and start asking:

“What is this entity doing over time?”

Behaviour is not a feature you toggle on.

It is something that emerges.

Understanding doesn’t happen in a frame.

It happens when time is allowed to speak.

Behaviour emerges when systems learn to wait.

What remains is to examine the quiet assumptions vision systems make before behaviour can even begin.

Next in Series: What Changes When Behaviour Becomes the Goal