Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Understanding Visual Perception in Surveillance Systems

Previous: https://varsity.thopps.com/when-motion-isnt-really-motion

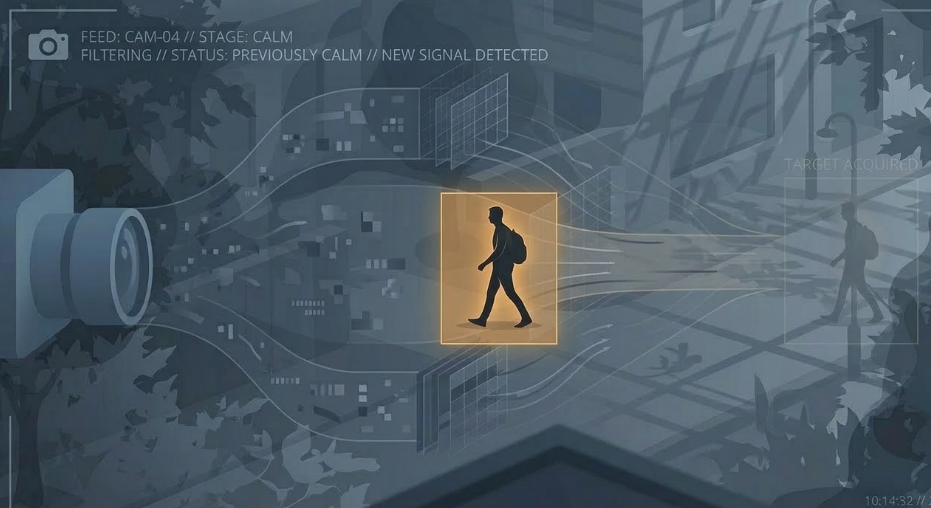

Understanding how video analytics separates persistent activity from environmental variation

After understanding how cameras perceive scenes, how video is composed of frames, and why pixels constantly fluctuate, one important challenge remains.

How does a surveillance system decide what actually matters in a scene?

A camera observes everything — shifting light, moving shadows, subtle reflections.

Yet most of these changes are not meaningful activity.

For surveillance systems to work reliably, they must learn to separate foreground events from the constantly changing background.

Humans instinctively separate a scene into two parts.

The environment — walls, floors, trees, and structures that define the space.

And the actors — people, vehicles, or objects moving through it.

Our attention immediately goes to activity.

For a computer vision system, this distinction does not exist initially.

Everything begins as changing pixels.

Foreground and background must be learned from patterns over time.

Background does not mean “unchanging.”

Even empty scenes contain constant variation:

- lighting fluctuations
- sensor noise
- compression artifacts
- subtle environmental motion

Because of this, backgrounds are defined not by stillness, but by predictable patterns of change.

Systems learn what normally fluctuates in a scene and treat it as expected behavior.
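One way to picture "predictable patterns of change" is a per-pixel statistical model. The sketch below is only an illustration under stated assumptions, not the method any particular product uses: it tracks a running mean and variance for a single pixel's brightness and treats values within a few standard deviations as expected behavior. The class name `PixelModel` and the parameters `alpha`, `k`, and `initial_var` are hypothetical, and plain Python is used to keep the example dependency-free; a real pipeline would apply the same idea to whole frames as arrays.

```python
import math


class PixelModel:
    """Running mean and variance for one pixel's brightness (hypothetical helper)."""

    def __init__(self, first_value, initial_var=25.0):
        self.mean = float(first_value)
        self.var = initial_var  # assumed starting variance, not a tuned value

    def is_expected(self, value, k=3.0):
        # A value within k standard deviations counts as normal fluctuation.
        return abs(value - self.mean) <= k * math.sqrt(self.var)

    def update(self, value, alpha=0.05):
        # Exponential moving averages: recent frames weigh more than old ones.
        diff = value - self.mean
        self.mean += alpha * diff
        self.var = (1 - alpha) * self.var + alpha * diff * diff


# An empty-hallway pixel whose brightness flickers a few levels around 100.
model = PixelModel(100)
for value in [98, 102, 99, 101, 100, 97, 103] * 10:
    model.update(value)

flicker_ok = model.is_expected(102)  # ordinary flicker stays inside the band
person_ok = model.is_expected(160)   # a much larger jump falls outside it
```

The point of the design is that the threshold is not fixed: a pixel that normally flickers a lot earns a wider tolerance band than one that is normally steady.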

Foreground activity appears when a new and persistent change enters the environment.

A person crossing a hallway.

A vehicle entering a parking lot.

An object placed on the floor.

These changes stand out because they do not match the learned background patterns.

However, this distinction may initially be subtle, especially for distant or slow-moving objects.

Foreground detection rarely relies on a single frame.

Instead, systems evaluate temporal consistency.

Brief changes are often ignored as noise.

Persistent changes across multiple frames become more significant.

By observing patterns over time, systems stabilize their interpretation of the scene.
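The temporal-consistency idea can be sketched as a simple streak counter. This is a minimal illustration, assuming a per-frame boolean "changed" signal is already available for a region; the function name `persistent_changes` and the `min_frames` value are hypothetical choices, not values taken from any real system.

```python
def persistent_changes(change_flags, min_frames=5):
    """Yield True only once a change has lasted min_frames consecutive frames."""
    streak = 0
    for changed in change_flags:
        # Any frame without a change resets the streak to zero.
        streak = streak + 1 if changed else 0
        yield streak >= min_frames


# One region over 12 frames: a one-frame glitch, then a sustained change.
flags = [False, True, False, False, True, True,
         True, True, True, True, False, False]
confirmed = list(persistent_changes(flags, min_frames=5))
```

Here the isolated change at the second frame never reaches the streak length and is dropped as noise, while the six-frame run is confirmed from its fifth consecutive frame onward. A larger `min_frames` suppresses more noise at the cost of reacting later.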

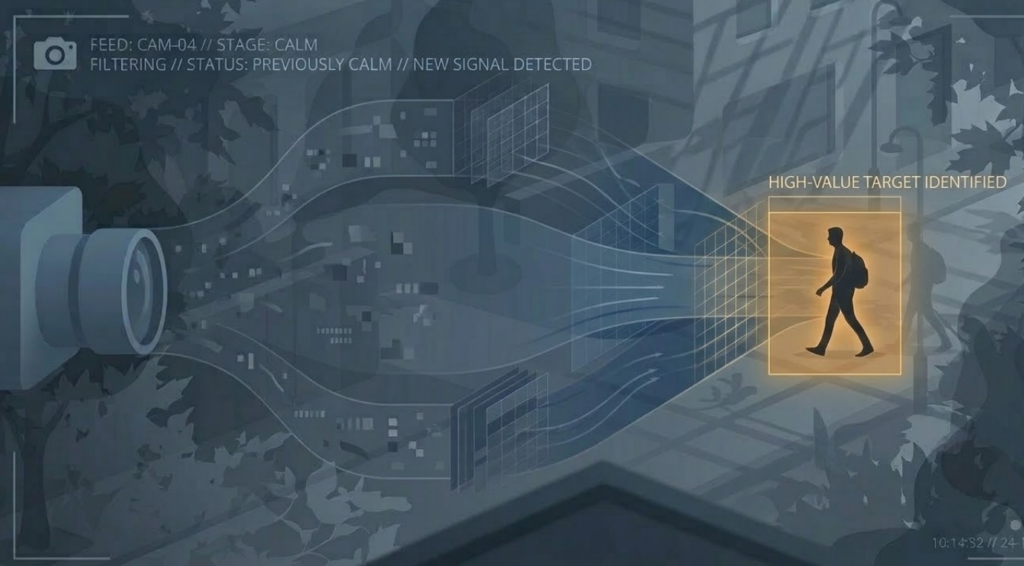

In production pipelines, foreground separation usually happens before higher-level analysis.

Typical systems include components such as:

- RTSP camera streams
- FFmpeg for video decoding
- OpenCV for frame processing
- AI models for detection

Foreground extraction helps narrow the system’s focus to regions where meaningful activity is likely occurring.
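As a rough illustration of narrowing the focus, the sketch below takes a foreground mask and crops the frame to the box that encloses the flagged pixels, so that a later detection stage would only see that region. It assumes the mask has already been produced by an earlier stage and uses nested Python lists in place of decoded video frames; the helper names `foreground_bbox` and `crop` are hypothetical.

```python
def foreground_bbox(mask):
    """Return (top, left, bottom, right) enclosing all foreground cells, or None."""
    if not mask or not mask[0]:
        return None
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        return None  # nothing flagged, so nothing to pass downstream
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    return rows[0], cols[0], rows[-1], cols[-1]


def crop(frame, bbox):
    """Cut the region described by bbox (inclusive bounds) out of the frame."""
    top, left, bottom, right = bbox
    return [row[left:right + 1] for row in frame[top:bottom + 1]]


# A 5x6 mask in which 1 marks pixels flagged as foreground.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 0, 1, 1, 1, 0],
    [0, 0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
]
# A stand-in frame whose pixel values encode their own position (row*10 + col).
frame = [[r * 10 + c for c in range(6)] for r in range(5)]

bbox = foreground_bbox(mask)
region = crop(frame, bbox)
```

A single enclosing box is the simplest possible choice; a scene with several separate objects would more likely be split into one region per connected group of pixels.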

The distinction between foreground and background can change.

A parked vehicle may eventually become part of the background if it remains long enough.

When it begins moving again, it returns to the foreground.

Surveillance systems therefore treat background as dynamic, continuously adapting to changes in the environment.
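The parked-vehicle behavior can be reproduced with a very small simulation. The sketch below is an assumption-laden toy, not a production algorithm: it follows one pixel, blends each new value into the background with a hypothetical learning rate `alpha`, and flags foreground when the difference exceeds a hypothetical `threshold`.

```python
def simulate(values, alpha=0.05, threshold=20.0):
    """Return per-frame foreground flags for one pixel with an adapting background."""
    background = float(values[0])
    flags = []
    for value in values:
        flags.append(abs(value - background) > threshold)
        # Blend the current frame into the background a little at a time.
        background += alpha * (value - background)
    return flags


# Empty asphalt (50), a car parks for 100 frames (200), then drives away (50).
values = [50] * 10 + [200] * 100 + [50] * 10
flags = simulate(values)

car_arrives = flags[10]    # first frame with the car present
car_absorbed = flags[109]  # last frame before the car leaves
car_departs = flags[110]   # first frame after the car has gone
```

When the car arrives it is flagged, after enough frames it is no longer flagged because the background has drifted toward it, and its departure registers as a fresh change. The learning rate sets the trade-off: a higher `alpha` absorbs stationary objects sooner but also risks absorbing slow-moving ones.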

Effective surveillance is not about detecting everything.

It is about ignoring what does not matter.

Without filtering, systems would react to every lighting change, shadow movement, or sensor fluctuation.

Background modelling allows systems to focus attention only on changes that deviate from normal patterns.

Separating foreground from background allows surveillance systems to transform noisy pixel changes into meaningful observations.

By learning what normally belongs to the environment, systems can identify when something truly changes.

In the end, intelligent surveillance is not built by reacting to everything a camera sees.

It emerges from learning what can safely be ignored.

Surveillance systems don’t understand scenes instantly — they build understanding from noisy, incomplete visual fragments.

Intelligence emerges when systems learn to ignore what changes constantly and focus on what truly matters.

First in Series: https://varsity.thopps.com/why-cameras-see-differently-than-humans