Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Foundations of Smart Surveillance

A learning story about seeing, understanding, and intelligence

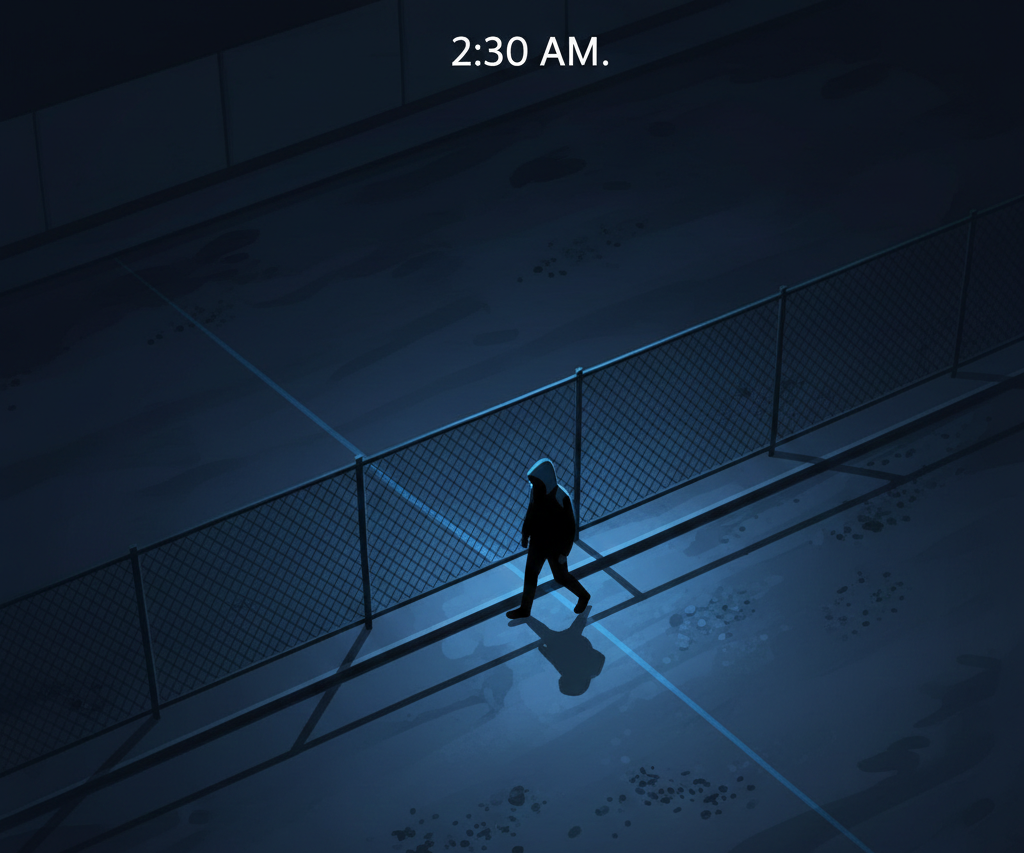

It was 2:30 AM.

The camera recorded everything.

A person entered the area, walked slowly along the boundary, paused near a restricted section, and left.

When the footage was reviewed later, the question wasn’t what happened.

It was:

Why didn’t the system react?

The camera had done its job perfectly.

But the system had not understood the situation.

That moment reveals an important lesson:

Seeing is not the same as understanding.

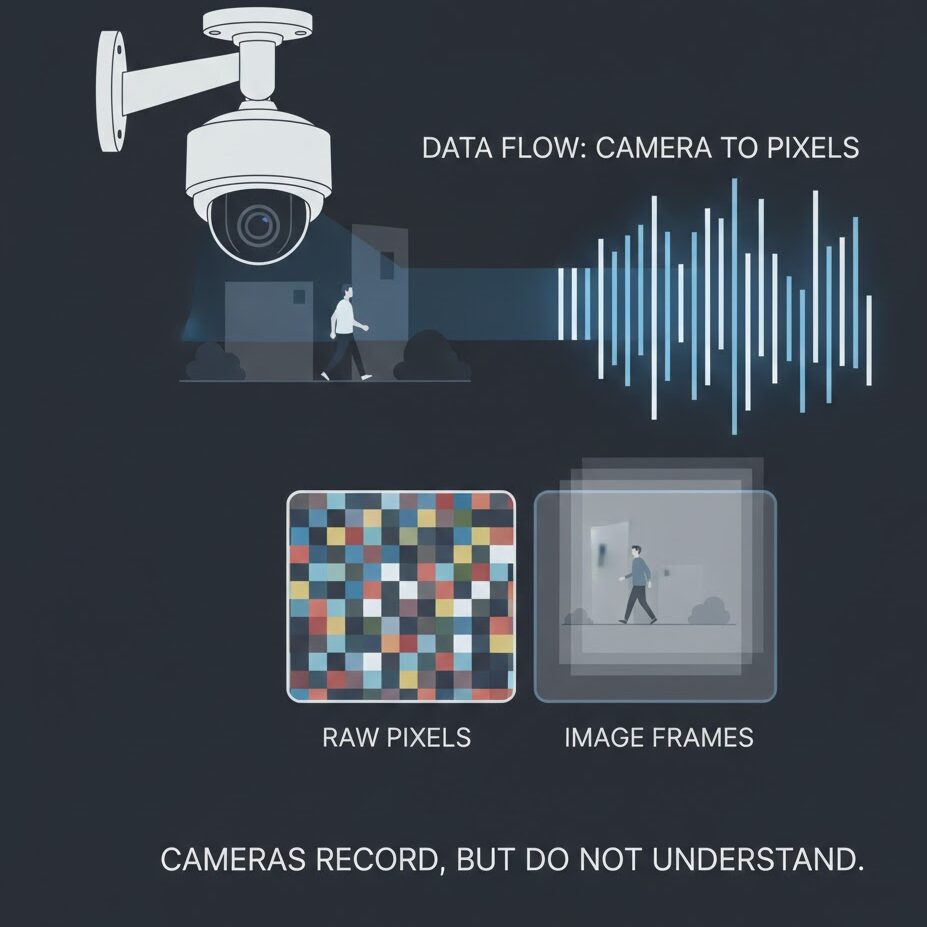

When we talk about surveillance, we often assume that cameras themselves are intelligent.

They are not.

A camera is only a sensor.

It captures visual information, converts it into pixels, and stores frames.

No matter how advanced it looks, a camera does not understand:

It does not know why something matters.

It only records what appears.

Understanding must come from systems built on top of the camera.

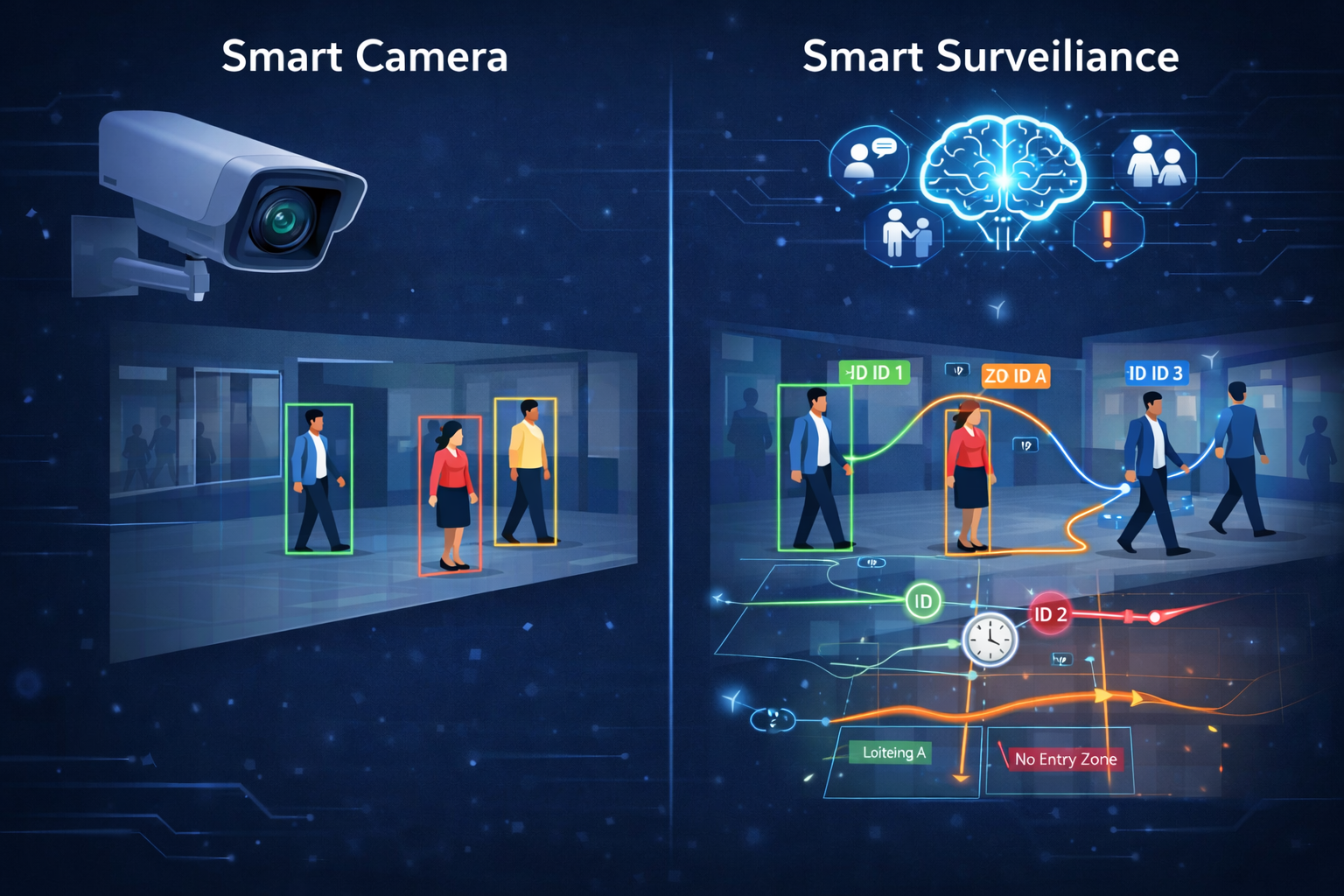

A smart camera improves traditional CCTV by adding basic visual analysis.

It can detect elements such as:

This is already a significant improvement over simple recording.

However, this intelligence is limited.

A smart camera analyzes video frame by frame, treating each moment independently.

It has no memory.

No history.

No context.

As a result, every detection appears equally important — even when it is not.

Consider a simple situation:

A person walking across a corridor.

Is this normal?

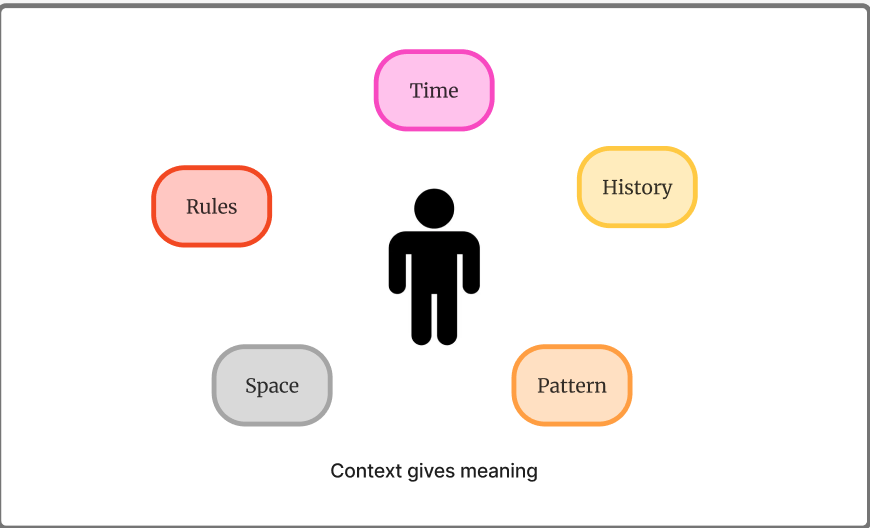

The answer depends on several factors:

A camera cannot evaluate these conditions.

It sees only a person — not the circumstances around them.

This is where most false alerts originate.

Not because detection is incorrect,

but because interpretation is missing.

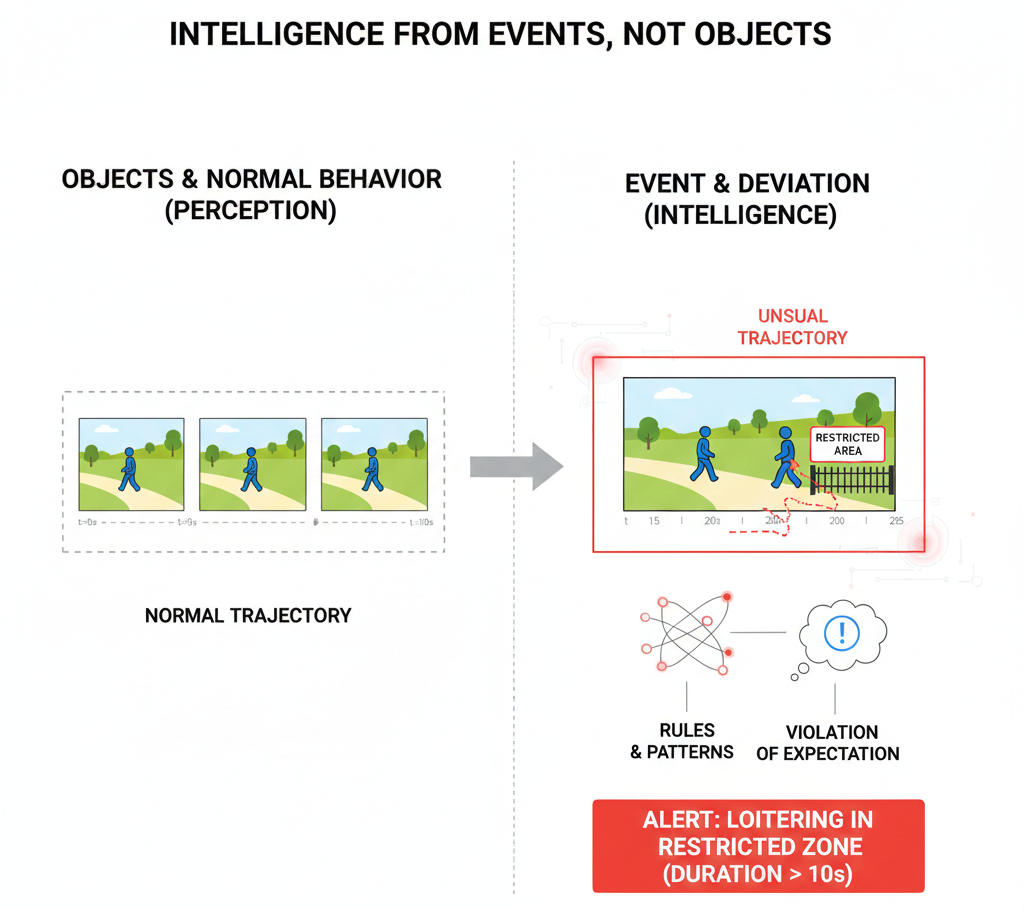

The key lesson was this:

Surveillance should not focus on detecting objects.

It should focus on understanding events.

An event is not something that appears in a single frame.

An event is something that violates expectation.

That expectation comes from rules, time, and patterns.

Once this became clear, the difference between smart cameras and smart surveillance was easy to understand.

Smart surveillance does not simply ask:

“Is there a person?”

Instead, it asks more meaningful questions:

Context turns raw detection into understanding.

Without context, intelligence cannot exist.

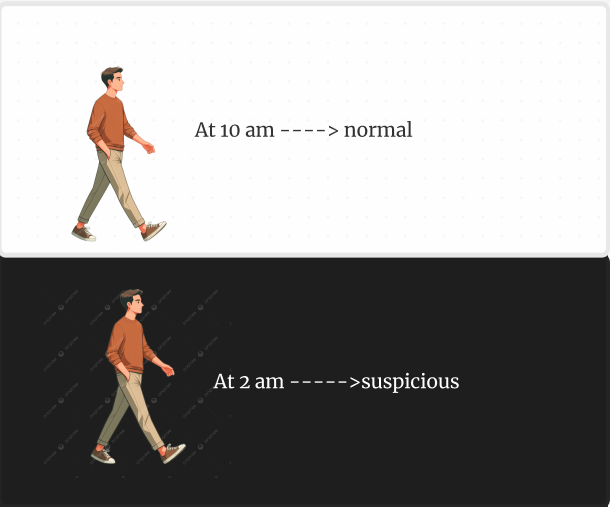

The same action can have very different meanings at different times.

Walking through an area at 10 AM may be routine.

The same movement at 2 AM may be unusual.

Smart surveillance understands this difference.

It evaluates activity using time-based context such as:

The system does not react to activity itself —

it reacts to activity that occurs outside expectation.
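To make the idea concrete, here is a minimal Python sketch of a time-based expectation check. The 08:00 to 20:00 window and the function name `is_outside_expectation` are assumptions chosen for illustration; a real deployment would load its schedule from site-specific configuration.

```python
from datetime import time

# Hypothetical schedule (an assumption): activity is expected 08:00-20:00.
EXPECTED_START = time(8, 0)
EXPECTED_END = time(20, 0)


def is_outside_expectation(
    observed: time,
    start: time = EXPECTED_START,
    end: time = EXPECTED_END,
) -> bool:
    """Return True when activity is observed outside the expected window."""
    if start <= end:
        return not (start <= observed < end)
    # The window wraps past midnight (for example 22:00 to 06:00).
    return not (observed >= start or observed < end)


print(is_outside_expectation(time(10, 0)))  # False: routine daytime movement
print(is_outside_expectation(time(2, 0)))   # True: same movement at 2 AM
```

The detection itself is identical in both calls. Only the time context changes the outcome.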

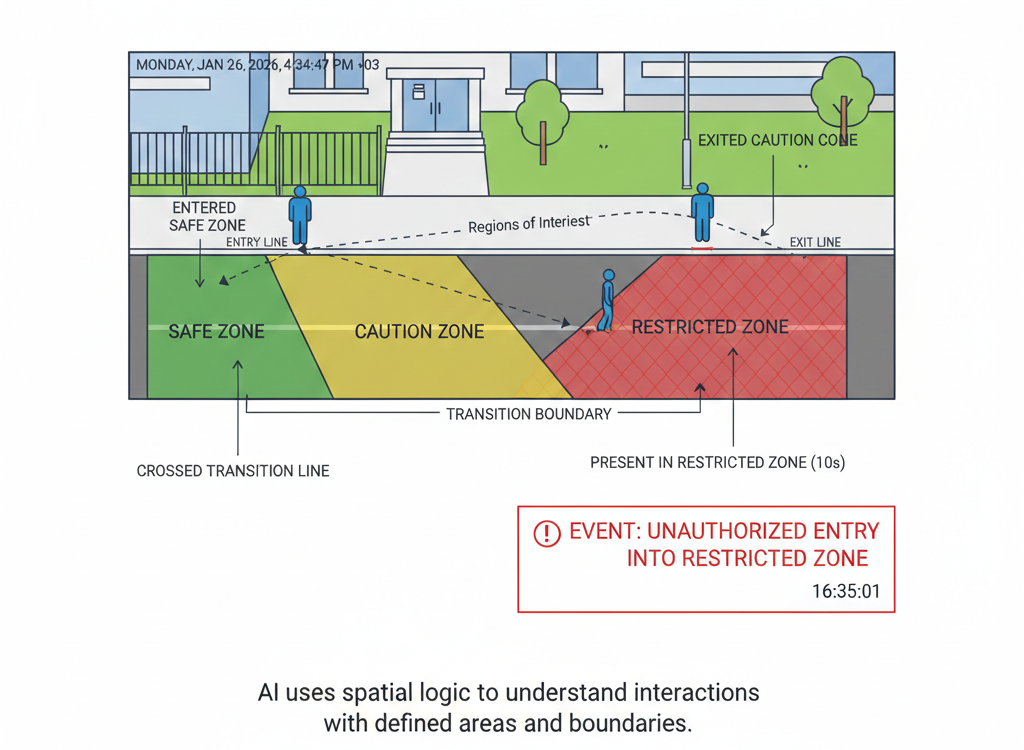

Smart surveillance also understands space.

Not just coordinates, but purpose.

Areas can be defined as:

Crossing a line or entering a zone is no longer just movement.

It becomes a meaningful transition.

Space adds structure to observation.

Objects tell us what is present.

Behavior tells us what is happening.

Smart surveillance focuses on behavior rather than isolated detections, such as:

Behavior cannot be identified from a single image.

It emerges only when multiple frames are connected and observed over time.

At this point, a natural question arises:

If cameras only see individual frames, how does smart surveillance understand behavior?

The answer lies in connecting frames over time.

A single frame shows presence.

Understanding appears only when frames are linked.

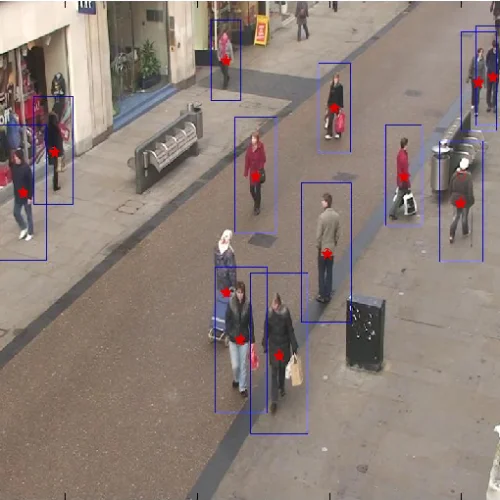

Instead of analyzing images independently, smart surveillance systems track objects across frames.

A detected person is no longer just a bounding box.

It becomes an identity.

That identity moves.

Over time, the system can observe:

This process is known as object tracking.

Tracking is what transforms vision into motion understanding.

Without tracking:

With tracking:

Loitering, for example, cannot be detected in a single frame.

It exists only across time.
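As a rough illustration of why loitering needs time, the sketch below measures how long each tracked identity stays inside a zone. The observation format `(timestamp_seconds, track_id, in_zone)`, the function name `loitering_tracks`, and the 30-second threshold in the example are all assumptions, not part of any specific product.

```python
from typing import Dict, List, Tuple

# Assumed observation format: (timestamp_seconds, track_id, in_zone)
Observation = Tuple[float, int, bool]


def loitering_tracks(
    observations: List[Observation], min_dwell_s: float
) -> List[int]:
    """Return track IDs whose continuous time in the zone reaches min_dwell_s."""
    entered_at: Dict[int, float] = {}
    flagged: List[int] = []
    for ts, track_id, in_zone in sorted(observations):
        if not in_zone:
            # Leaving the zone resets the dwell timer for this identity.
            entered_at.pop(track_id, None)
            continue
        start = entered_at.setdefault(track_id, ts)
        if ts - start >= min_dwell_s and track_id not in flagged:
            flagged.append(track_id)
    return flagged


observations = [
    (0, 1, True), (10, 1, True), (35, 1, True),                 # track 1 stays
    (0, 2, True), (5, 2, False), (20, 2, True), (30, 2, True),  # track 2 leaves
]
print(loitering_tracks(observations, min_dwell_s=30))  # [1]
```

No single observation in the list is suspicious on its own. The alert comes from the elapsed time between observations of the same identity.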

Some systems rely on simple rules, such as:

“If a person enters this zone, raise an alert.”

Rules are useful — but they are not sufficient.

Smart surveillance combines multiple layers:

Rules define boundaries.

Tracking provides continuity.

Time gives meaning.

Only together do they create understanding.

This understanding is not produced by a single model or algorithm.

Smart surveillance works as a pipeline, where each layer contributes a specific form of intelligence.

At the foundation are object detection models such as:

These models identify objects like people and vehicles in each frame.

Their role is visibility — not understanding.

They answer one question:

“What is present in this frame?”

Tracking algorithms connect detections across frames, such as:

Tracking assigns persistent identities to objects.

This enables:

Tracking introduces time awareness into the system.
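The sketch below shows one very simplified way to give detections persistent identities: greedy matching by bounding-box overlap (IoU). It is an illustrative assumption, not a reproduction of any particular production tracker, and the class name `GreedyIouTracker` and the 0.3 threshold are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


class GreedyIouTracker:
    """Toy tracker: each detection inherits the ID of the best-overlapping track."""

    def __init__(self, iou_threshold: float = 0.3) -> None:
        self.iou_threshold = iou_threshold
        self.tracks: Dict[int, Box] = {}
        self._next_id = 1

    def update(self, detections: List[Box]) -> Dict[int, Box]:
        assigned: Dict[int, Box] = {}
        unmatched = dict(self.tracks)
        for det in detections:
            best_id: Optional[int] = None
            best_score = self.iou_threshold
            for track_id, box in unmatched.items():
                score = iou(det, box)
                if score >= best_score:
                    best_id, best_score = track_id, score
            if best_id is None:
                # No existing track overlaps enough: start a new identity.
                best_id = self._next_id
                self._next_id += 1
            else:
                del unmatched[best_id]
            assigned[best_id] = det
        # Simplification: tracks without a match are dropped immediately.
        self.tracks = assigned
        return assigned


tracker = GreedyIouTracker()
tracker.update([(0, 0, 10, 10)])
frame2 = tracker.update([(1, 0, 11, 10), (50, 50, 60, 60)])
print(sorted(frame2))  # [1, 2]: the first person keeps ID 1, a new one gets ID 2
```

Real trackers usually add motion prediction and tolerate short occlusions, which this sketch deliberately leaves out.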

Regions of interest define how space is interpreted:

Geometric logic determines whether an object:

This is where space gains meaning.
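A small sketch of this geometric logic, assuming a zone is described as a polygon in image coordinates and an object is reduced to a single reference point (for example, the bottom center of its bounding box). The names `point_in_polygon` and `entered_zone` and the square zone are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many polygon edges lie to the right of p."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can count.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def entered_zone(prev: Point, curr: Point, polygon: List[Point]) -> bool:
    """True when an object moved from outside the zone to inside it."""
    return not point_in_polygon(prev, polygon) and point_in_polygon(curr, polygon)


# Hypothetical restricted zone: a 10 x 10 square in image coordinates.
restricted = [(0, 0), (10, 0), (10, 10), (0, 10)]
print(point_in_polygon((5, 5), restricted))      # True
print(entered_zone((12, 5), (8, 5), restricted))  # True: outside -> inside
```

Note that `entered_zone` needs two positions of the same object, so it depends on tracking having already linked them.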

Time-based logic evaluates patterns such as:

Temporal reasoning converts movement into behavior.

Rules combine multiple signals:

Only when these conditions align does an event occur.

This reduces noise and increases confidence.
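As a minimal sketch of this kind of rule, the code below raises an event only when several signals agree. The `Signals` fields and the 20-second dwell threshold are assumptions picked for illustration.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-track signals produced by the earlier layers."""
    in_restricted_zone: bool
    outside_expected_hours: bool
    dwell_seconds: float


def is_event(s: Signals, min_dwell_s: float = 20.0) -> bool:
    """An event requires space, time, and behavior conditions to align."""
    return (
        s.in_restricted_zone
        and s.outside_expected_hours
        and s.dwell_seconds >= min_dwell_s
    )


# A person passing through the zone during the day: detection, but no event.
print(is_event(Signals(True, False, 45.0)))  # False
# The 2:30 AM pause near the restricted section: all conditions align.
print(is_event(Signals(True, True, 45.0)))   # True
```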

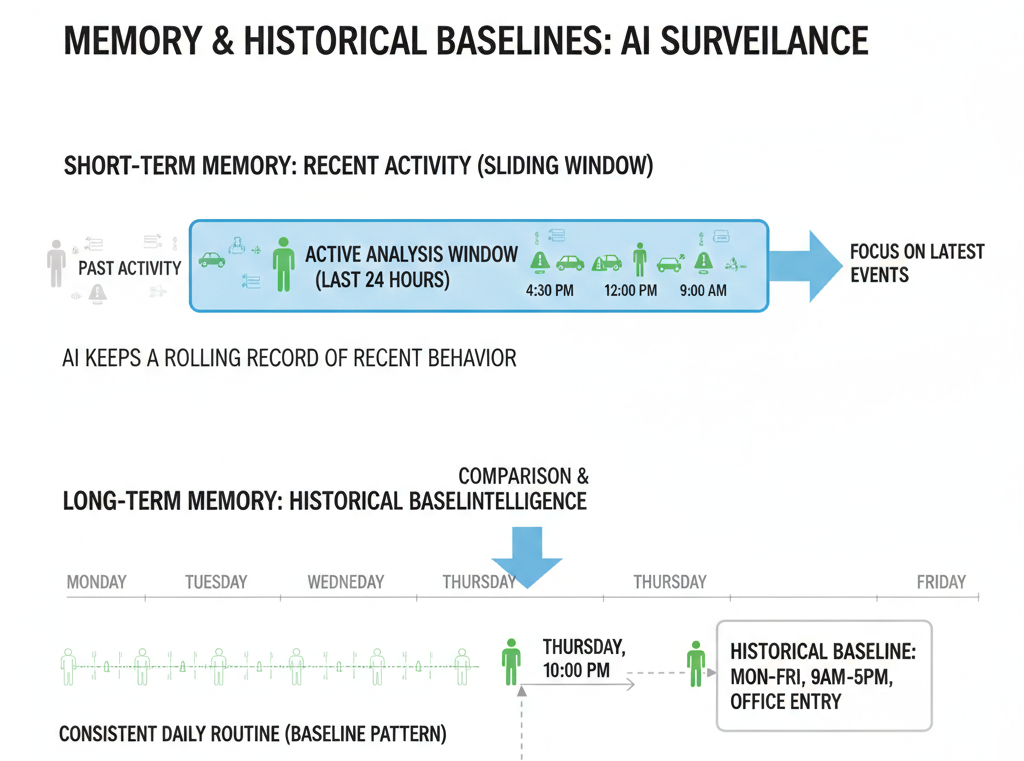

Smart systems maintain short-term and long-term memory, including:

This allows detection of anomalies based not on rarity, but on deviation from previously observed patterns.
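One simple way to express "deviation" is to compare the current observation with a remembered baseline. The sketch below uses a z-score over historical counts for the same hour; the function name `deviation_score`, the sample history, and the choice of z-score are assumptions, and real systems may use richer models.

```python
from statistics import mean, pstdev
from typing import Sequence


def deviation_score(history: Sequence[float], observed: float) -> float:
    """How many standard deviations `observed` lies from the historical mean."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly constant history: any change at all is a deviation.
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma


# Hypothetical counts of people seen between 2 AM and 3 AM on past nights.
night_history = [0, 1, 0, 1]
print(deviation_score(night_history, 4))  # 7.0
```

The same count of 4 people would score close to zero against a busy daytime history, which is the sense in which the anomaly depends on deviation rather than on the raw number.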

Finally, systems translate detections into human-understandable output:

This layer bridges machine perception and human understanding.
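For illustration only, this last step could be as simple as turning a structured event into a sentence an operator can act on. The `Event` fields and the message wording below are assumptions, not a standard alert format.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """Hypothetical structured event emitted by the rule layer."""
    track_id: int
    zone_name: str
    dwell_seconds: float
    clock_time: str  # e.g. "02:30"


def describe(event: Event) -> str:
    """Render a machine event as a short human-readable alert."""
    return (
        f"Person #{event.track_id} stayed in '{event.zone_name}' "
        f"for {event.dwell_seconds:.0f} s at {event.clock_time}."
    )


print(describe(Event(7, "Restricted Section", 42.0, "02:30")))
# Person #7 stayed in 'Restricted Section' for 42 s at 02:30.
```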

Understanding smart surveillance requires thinking in layers.

No single algorithm makes a system intelligent.

Intelligence emerges from how different components interact over time.

Once this becomes clear, surveillance no longer feels mysterious.

It becomes a structured reasoning system.

Cameras help us see.

Intelligence helps us decide.

True surveillance is not about watching everything.

It is about recognizing when something is not right —

even when it appears ordinary.

That is the key lesson.

And once you understand how vision becomes reasoning,

you begin to see surveillance systems in a completely different way.

Now that we’ve understood the difference between seeing and understanding, the next step is to explore how systems actually build that understanding.

In the next article, we’ll look at detection, tracking, and behavior, and why object detection alone is not intelligence.

Next in Series: The Three Layers of Vision Intelligence