Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Understanding Visual Perception in Surveillance Systems

Previous: https://varsity.thopps.com/why-a-still-scene-is-never-truly-still

Why surveillance systems don’t actually “watch” video — they analyse frames.

In surveillance systems, motion is often treated as the starting point of understanding.

If something moves, something must be happening.

But in real video feeds, movement is not always what it appears to be.

Before intelligence can interpret activity, systems must first answer a more basic question:

Is this motion real — or is it just visual change?

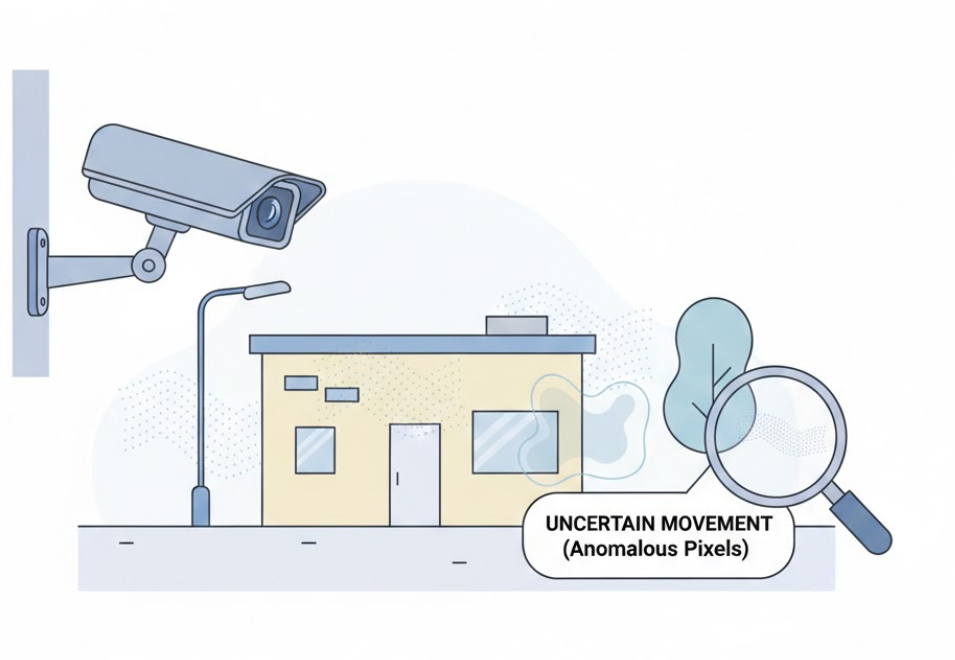

At the lowest level, surveillance systems do not detect motion directly.

They detect change.

Each frame is compared with the next, and any difference in pixel values is treated as potential activity.

This process — often called frame differencing — forms the foundation of motion perception.
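As a minimal sketch of frame differencing, the snippet below compares two tiny grayscale frames pixel by pixel. The function names, the threshold of 15, and the 4x4 frames are illustrative assumptions, not values from any particular product.

```python
def frame_difference(prev, curr, threshold=15):
    """Return a binary mask: 1 where intensity changed by more than threshold."""
    return [
        [1 if abs(c - p) > threshold else 0 for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]


def changed_fraction(mask):
    """Fraction of pixels flagged as changed."""
    total = sum(len(row) for row in mask)
    return sum(map(sum, mask)) / total if total else 0.0


# Two 4x4 grayscale frames (values 0-255). A bright 2x2 patch appears in the second.
frame_a = [[50] * 4 for _ in range(4)]
frame_b = [row[:] for row in frame_a]
frame_b[1][1] = frame_b[1][2] = frame_b[2][1] = frame_b[2][2] = 200

mask = frame_difference(frame_a, frame_b)
print(changed_fraction(mask))  # 0.25
```

The mask says only that pixel values changed. It carries no information about why they changed.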

But pixel change does not always mean physical movement.

A slight brightness shift can modify thousands of pixels at once.

To the system, that looks like motion — even when nothing moved.
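To make this concrete, the sketch below brightens a static synthetic scene by 20 grey levels and counts how many pixels a naive differencer flags. It also shows one simple mitigation, subtracting the change in mean brightness before comparing. That mitigation is an assumption chosen for illustration; it only handles uniform shifts, and a large real object would also move the mean.

```python
def mean_intensity(frame):
    """Average grey level over the whole frame."""
    pixels = [p for row in frame for p in row]
    return sum(pixels) / len(pixels)


def count_changed(prev, curr, threshold=15, compensate=False):
    """Count pixels whose change exceeds threshold, optionally after
    removing the global brightness offset between the two frames."""
    offset = mean_intensity(curr) - mean_intensity(prev) if compensate else 0.0
    return sum(
        1
        for prev_row, curr_row in zip(prev, curr)
        for p, c in zip(prev_row, curr_row)
        if abs((c - offset) - p) > threshold
    )


# A static 10x10 scene, then the same scene under slightly brighter light.
scene = [[(x * 7 + y * 13) % 200 for x in range(10)] for y in range(10)]
brighter = [[p + 20 for p in row] for row in scene]

naive = count_changed(scene, brighter)
compensated = count_changed(scene, brighter, compensate=True)
print(naive, compensated)  # 100 0
```

Nothing in the scene moved, yet the naive count flags every pixel.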

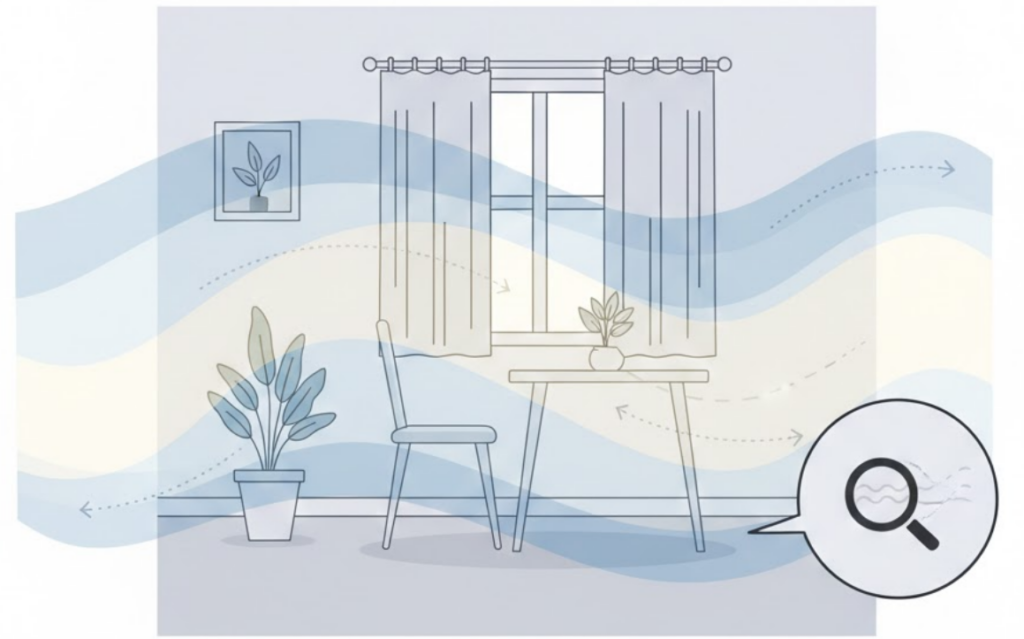

Lighting is one of the most common sources of false motion.

Examples include:

These changes affect large regions of the image simultaneously.

Humans adapt instantly and barely notice.

Cameras record every fluctuation precisely.

As a result, motion can appear even in empty scenes.

Shadows are especially difficult.

A moving shadow changes pixel intensity and shape, often resembling an actual object.

From a visual standpoint:

To a basic motion detector, they are indistinguishable from real movement.

This is why shadow handling becomes an important consideration in video analytics — not because shadows are complex, but because they closely imitate object behaviour at the pixel level.

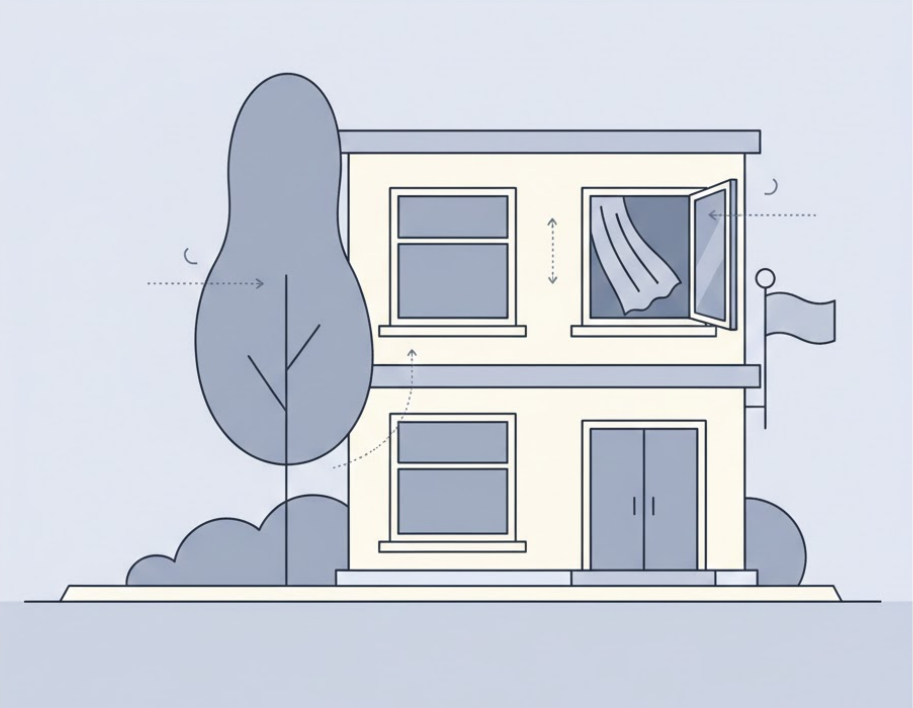

Real environments are rarely static.

Common sources of background motion include:

These elements produce consistent motion patterns that exist even when no meaningful activity is present.

From the system’s perspective, the background itself appears alive.

Distinguishing foreground activity from background variation becomes essential.
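One common way to separate the two is to keep a slowly updated model of the background and flag only pixels that deviate from it. The sketch below uses a running average; the class name, `alpha`, and `threshold` are hypothetical choices for illustration, and real systems often use more elaborate models.

```python
class RunningAverageBackground:
    """Per-pixel running average used as a simple background model."""

    def __init__(self, first_frame, alpha=0.05, threshold=20):
        self.background = [[float(p) for p in row] for row in first_frame]
        self.alpha = alpha          # how quickly the model adapts
        self.threshold = threshold  # deviation needed to count as foreground

    def apply(self, frame):
        """Return a foreground mask, then blend the frame into the model."""
        mask = []
        for bg_row, row in zip(self.background, frame):
            mask.append([1 if abs(p - b) > self.threshold else 0
                         for p, b in zip(row, bg_row)])
            for x, p in enumerate(row):
                bg_row[x] += self.alpha * (p - bg_row[x])
        return mask


model = RunningAverageBackground([[100] * 3 for _ in range(3)])

# Background that flickers between 100 and 110 (for example, swaying foliage).
flicker_hits = 0
for i in range(10):
    level = 100 + (10 if i % 2 else 0)
    flicker_hits += sum(map(sum, model.apply([[level] * 3 for _ in range(3)])))

# A much brighter object then appears at the centre pixel.
frame = [[100] * 3 for _ in range(3)]
frame[1][1] = 200
object_mask = model.apply(frame)

print(flicker_hits, object_mask[1][1])  # 0 1
```

The small, repeating variation stays inside the model's tolerance, while the object stands out against it.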

Some motion appears large simply because of camera geometry.

Insects flying close to the lens can occupy many pixels.

Rain or snow can appear as rapid pixel flashes across frames.

Though physically insignificant, these elements generate strong visual change.

This mismatch between physical size and visual impact is one of the core challenges of camera-based perception.
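The mismatch can be estimated with the pinhole-camera approximation, where projected size in pixels is roughly focal length (in pixels) times object size divided by distance. The focal length of 1000 px and the sizes and distances below are assumed values for illustration, and the model ignores defocus and lens distortion.

```python
def apparent_size_px(object_size_m, distance_m, focal_length_px=1000.0):
    """Approximate projected size in pixels under a pinhole-camera model."""
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return focal_length_px * object_size_m / distance_m


insect = apparent_size_px(0.01, 0.05)  # 1 cm insect, 5 cm from the lens
person = apparent_size_px(1.7, 20.0)   # 1.7 m person, 20 m away

print(f"{insect:.0f} px vs {person:.0f} px")  # 200 px vs 85 px
```

Under these assumptions the insect spans more than twice as many pixels as the person.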

In real surveillance pipelines — often built using:

these disturbances appear continuously.

Without proper handling, systems may produce:

These behaviours do not indicate poor design.

They reflect the raw complexity of visual input.

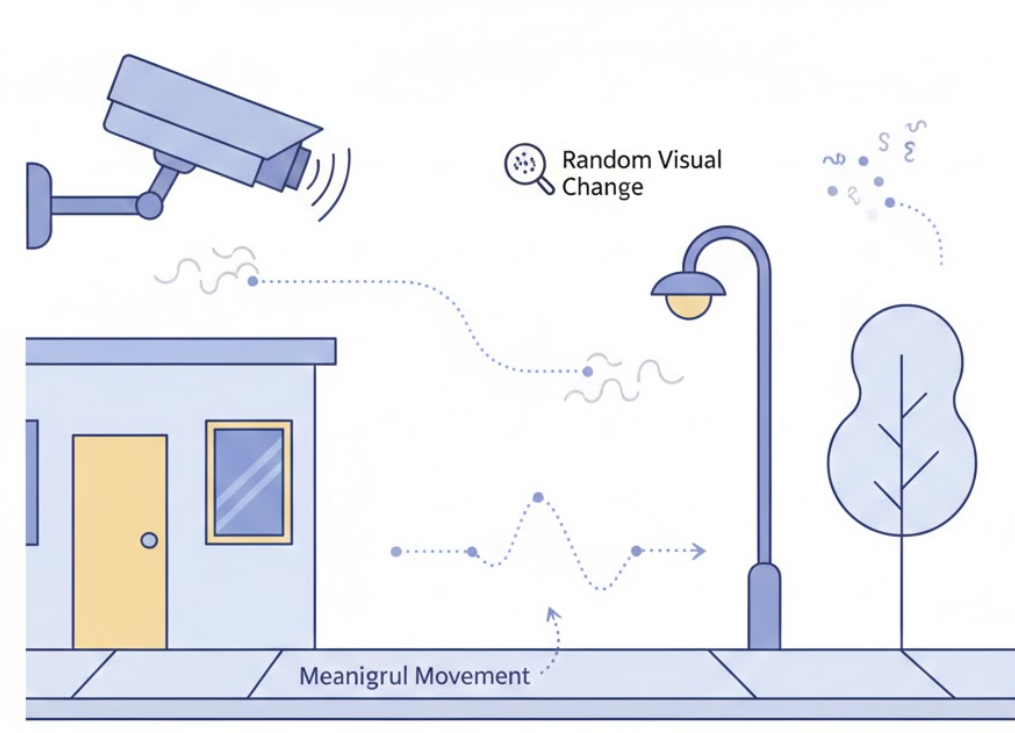

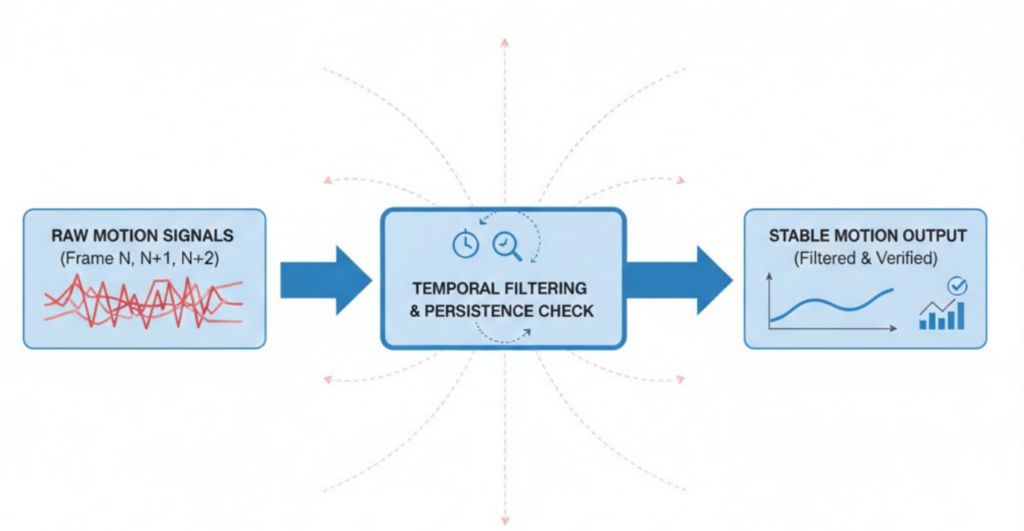

Well-designed systems do not react to every pixel change.

Instead, they evaluate motion based on characteristics such as:

Short-lived or scattered changes are treated as noise.

Sustained and structured movement is evaluated further.

This layered filtering allows systems to remain sensitive without becoming reactive.
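One such filter looks at spatial structure: scattered single-pixel changes are ignored, while a connected region of changed pixels is passed on. The sketch below measures the largest 4-connected region in a change mask. The function names and the `min_region` value are assumptions for illustration.

```python
from collections import deque


def largest_region(mask):
    """Size of the largest 4-connected group of changed pixels."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    best = 0
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited changed pixel.
            size = 0
            queue = deque([(y, x)])
            seen[y][x] = True
            while queue:
                cy, cx = queue.popleft()
                size += 1
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            best = max(best, size)
    return best


def is_structured(mask, min_region=4):
    """True when the mask contains a connected region of at least min_region pixels."""
    return largest_region(mask) >= min_region


# Both masks contain five changed pixels, arranged differently.
scattered = [[1, 0, 1, 0], [0, 0, 0, 1], [1, 0, 0, 0], [0, 0, 1, 0]]
blob = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 1, 0, 0]]

print(is_structured(scattered), is_structured(blob))  # False True
```

The amount of change is identical in both masks. Only its arrangement differs.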

Recognizing that not all motion is meaningful changes how surveillance systems are designed.

Motion is not treated as an event — it is treated as a signal.

Only when that signal shows consistency, structure, and continuity does it become relevant.

This is how systems avoid reacting to every flicker while remaining attentive to real activity.
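Treating motion as a signal can be sketched as a persistence check over per-frame motion scores (for example, the fraction of changed pixels). The `level` and `min_frames` values and the sample scores below are hypothetical.

```python
def persistent_motion(scores, level=0.05, min_frames=3):
    """For each frame, report whether the motion score has stayed at or
    above level for at least min_frames consecutive frames."""
    streak = 0
    flags = []
    for score in scores:
        streak = streak + 1 if score >= level else 0
        flags.append(streak >= min_frames)
    return flags


# A single-frame flicker (0.30) followed by four frames of sustained motion.
scores = [0.0, 0.30, 0.0, 0.0, 0.08, 0.09, 0.10, 0.11, 0.0]
flags = persistent_motion(scores)
print(flags)
# [False, False, False, False, False, False, True, True, False]
```

The large but momentary spike never raises a flag, while the weaker sustained activity does once it has lasted three frames.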

Not every visual change represents real movement.

Understanding this distinction is essential before motion can become meaningful information.

Next in Series: Separating Foreground from Background