Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Understanding Visual Perception in Surveillance Systems

Previous: https://varsity.thopps.com/the-illusion-of-continuous-video

Understanding pixel instability, noise, and visual fluctuations in surveillance video.

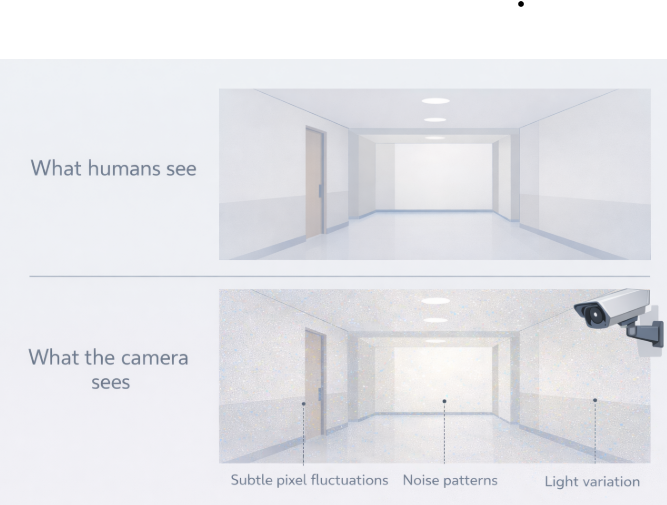

When we look at a surveillance feed and nothing seems to be happening, we naturally assume the scene is static.

No people.

No movement.

No activity.

To a human observer, the environment feels completely still.

But to a video analytics system, that same scene is quietly changing all the time.

Human vision is excellent at ignoring small variations.

Our eyes adapt to lighting changes instantly.

Our brain filters flicker, noise, and repetition.

Unless something meaningful changes, we consider the scene stable.

This ability allows humans to focus on what matters — and ignore everything else.

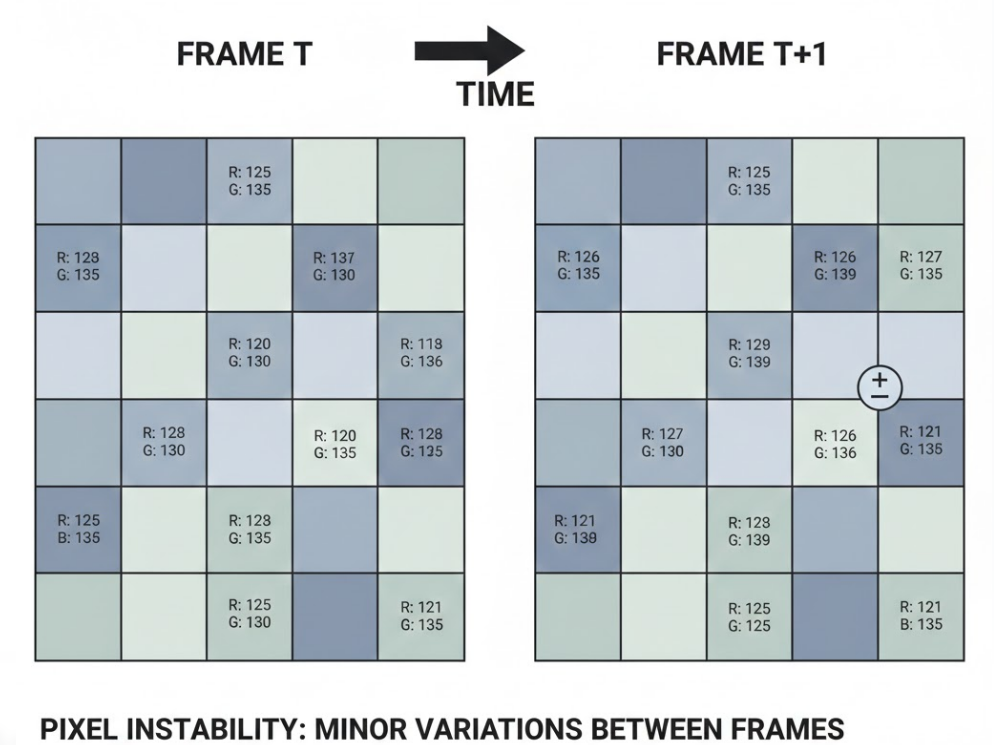

A pixel in a video frame is not a constant value.

Even when the camera is pointed at an empty hallway, pixel values subtly fluctuate.

These changes come from several sources, including sensor noise, shifts in lighting, and video compression.

The result is that two frames captured milliseconds apart are never identical.

To the system, the scene is always slightly different.
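A small simulation can make this concrete. The sketch below is illustrative only: it assumes sensor noise is roughly Gaussian, and the names `capture_frame`, `count_changed_pixels`, and `noise_sigma` are invented for this example rather than taken from any camera API.

```python
import random

def capture_frame(scene, noise_sigma, rng):
    """Return a noisy 8-bit reading of an unchanging scene."""
    frame = []
    for row in scene:
        noisy_row = []
        for value in row:
            # Assumption: sensor noise is modelled as zero-mean Gaussian
            reading = round(value + rng.gauss(0, noise_sigma))
            noisy_row.append(min(255, max(0, reading)))  # clamp to 0..255
        frame.append(noisy_row)
    return frame

def count_changed_pixels(frame_a, frame_b):
    """Count pixels whose value differs at all between two frames."""
    return sum(
        1
        for row_a, row_b in zip(frame_a, frame_b)
        for a, b in zip(row_a, row_b)
        if a != b
    )

rng = random.Random(0)                    # fixed seed for repeatability
scene = [[120] * 64 for _ in range(48)]   # a flat grey "empty hallway"
frame_1 = capture_frame(scene, noise_sigma=2.0, rng=rng)
frame_2 = capture_frame(scene, noise_sigma=2.0, rng=rng)

changed = count_changed_pixels(frame_1, frame_2)
print(f"{changed} of {64 * 48} pixels differ between two frames of a static scene")
```

Nothing in the scene moved, yet most pixels report a different value from one frame to the next.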

Lighting is one of the biggest sources of pixel variation.

Small lighting changes are almost invisible to humans.

But cameras record them precisely.

A slight brightness change can modify thousands of pixel values at once.

To a system analyzing frames, this can resemble motion, even though nothing moved.
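Here is a toy illustration of that effect, assuming a naive frame-differencing detector and a perfectly uniform brightness shift. Real lighting changes are rarely this uniform, and the helper names (`changed_fraction`, `compensate_brightness`) are hypothetical.

```python
def changed_fraction(prev, curr, threshold):
    """Fraction of pixels whose absolute difference exceeds the threshold."""
    total = len(prev) * len(prev[0])
    changed = sum(
        1
        for row_p, row_c in zip(prev, curr)
        for p, c in zip(row_p, row_c)
        if abs(c - p) > threshold
    )
    return changed / total

def mean_brightness(frame):
    """Average pixel value over the whole frame."""
    return sum(sum(row) for row in frame) / (len(frame) * len(frame[0]))

def compensate_brightness(prev, curr):
    """Shift curr so its mean brightness matches prev (global offset only)."""
    offset = mean_brightness(curr) - mean_brightness(prev)
    return [[c - offset for c in row] for row in curr]

# A static scene with a horizontal gradient, so the image is not flat.
prev = [[100 + x for x in range(64)] for _ in range(48)]
# The same scene after a lighting change: every pixel is 5 levels brighter.
curr = [[value + 5 for value in row] for row in prev]

naive = changed_fraction(prev, curr, threshold=3)
compensated = changed_fraction(prev, compensate_brightness(prev, curr), threshold=3)
print(f"naive differencing: {naive:.0%} of pixels flagged")
print(f"after brightness compensation: {compensated:.0%} of pixels flagged")
```

With plain differencing, the whole frame looks like motion. Removing the global brightness offset first makes the "motion" disappear, which is one simple way a system can tell a lighting shift from real movement.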

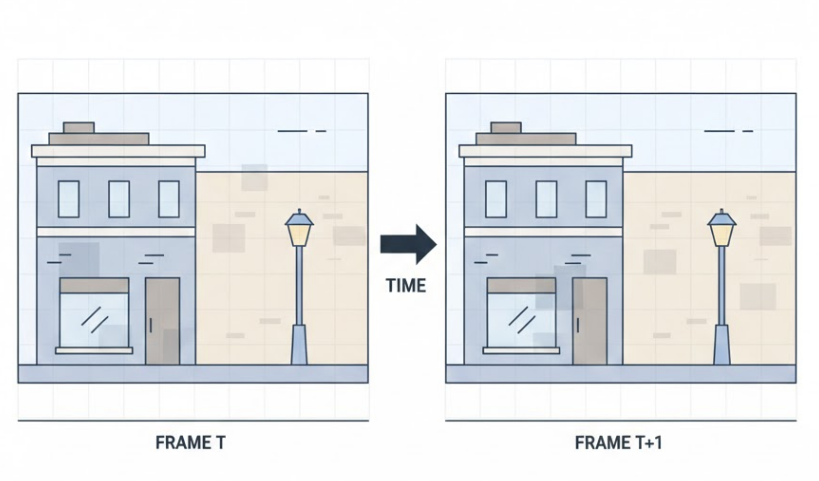

Most surveillance cameras do not send raw video.

They use compression formats such as H.264 or H.265 to reduce bandwidth.

Compression works by approximating changes between frames.

While efficient, this process introduces visual artifacts.

These artifacts change from frame to frame, creating visual variation even in static scenes.

Real-world environments are rarely motionless.

Even empty scenes contain small, ongoing changes.

To humans, these details fade into the background.

To cameras, they are measurable changes.

This is why “background” is not as simple as it sounds.

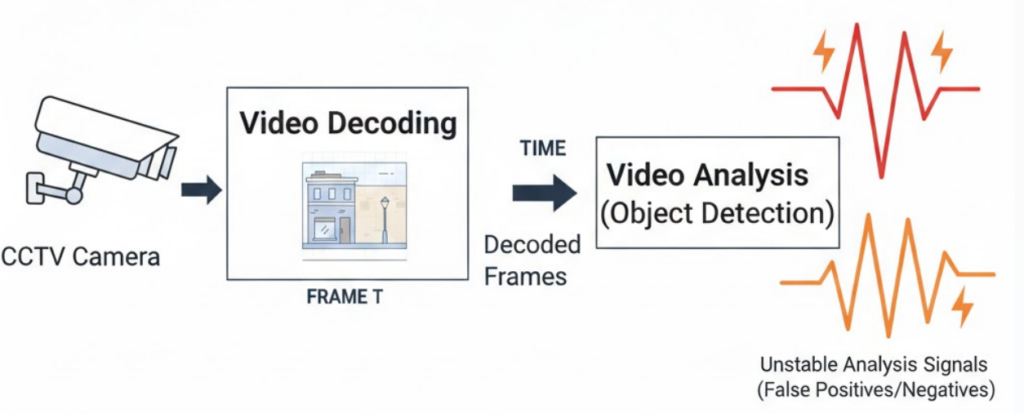

In real surveillance pipelines, these small variations appear constantly.

Without proper handling, they can lead to false motion detections and unnecessary alerts.

This is not a model issue. It is a characteristic of video itself.

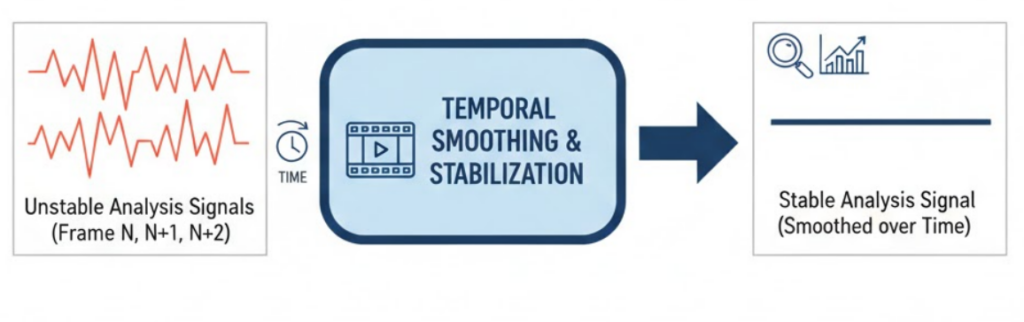

Well-designed surveillance systems do not treat every pixel change as meaningful.

Instead, they observe patterns across time.

Short-lived fluctuations are absorbed.

Persistent changes are evaluated further.

By understanding what normally changes in a scene, systems learn what can safely be ignored.

This is how stability emerges from noisy input.
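One way to picture "learning what normally changes" is a per-pixel statistical model. The sketch below is a simplified assumption, not a description of any particular product: it summarises each pixel's history as a mean and standard deviation, then flags only readings that fall far outside that normal range. The sample values and the names `learn_pixel_model` and `is_unusual` are made up for illustration.

```python
import statistics

def learn_pixel_model(history):
    """Summarise a pixel's normal behaviour as (mean, standard deviation)."""
    return statistics.mean(history), statistics.pstdev(history)

def is_unusual(value, model, k=3.0, min_sigma=1.0):
    """True if value lies more than k standard deviations from the learned mean."""
    mean, sigma = model
    # min_sigma keeps the tolerance from collapsing to zero for very quiet pixels
    return abs(value - mean) > k * max(sigma, min_sigma)

# Hypothetical brightness histories for two pixels in the same scene.
steady_wall = [120, 121, 119, 120, 122, 118, 120, 121]      # quiet pixel
flickering_sign = [100, 140, 102, 138, 99, 141, 101, 139]   # pixel near a blinking light

wall_model = learn_pixel_model(steady_wall)
sign_model = learn_pixel_model(flickering_sign)

wall_alarm = is_unusual(140, wall_model)   # 140 is far outside the wall's normal range
sign_alarm = is_unusual(140, sign_model)   # 140 is ordinary for the flickering pixel
print(wall_alarm, sign_alarm)
```

The same reading of 140 is treated as significant on the quiet wall and ignored on the flickering pixel, because the model has learned that large swings are normal there.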

Once we realize that stillness is only a human perception, many system behaviors become clearer.

Why systems wait before reacting.

Why alerts are filtered.

Why time-based analysis is essential.
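The waiting behavior can be sketched as a simple persistence filter. This is a minimal example under assumed values: the function name `persistent_alerts` and the `min_frames` setting are hypothetical, and real systems typically combine this idea with other checks.

```python
def persistent_alerts(detections, min_frames):
    """Return per-frame alert flags that turn True only after a detection
    has lasted for min_frames consecutive frames."""
    streak = 0
    alerts = []
    for detected in detections:
        # Extend the streak on a detection, reset it on a quiet frame
        streak = streak + 1 if detected else 0
        alerts.append(streak >= min_frames)
    return alerts

# Per-frame "something changed" flags: a 2-frame glitch, then a 6-frame event.
detections = [False, True, True, False, False] + [True] * 6 + [False]
alerts = persistent_alerts(detections, min_frames=4)

for frame_index, (detected, alert) in enumerate(zip(detections, alerts)):
    print(frame_index, detected, alert)
```

The two-frame glitch never raises an alert. The longer event does, but only from its fourth consecutive frame onward, which is the delay an operator sees as the system "waiting" before it reacts.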

Surveillance systems are not sensitive because they are weak — they are cautious because the visual world is noisy.

A scene that appears still to humans is never truly still to a camera.

Understanding this helps explain why surveillance systems must carefully separate meaningful activity from visual noise.

In the next chapter, we’ll explore how systems begin distinguishing real movement from background change — and how motion becomes meaningful.

Next in Series: When Motion Isn’t Really Motion