Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Foundations of Smart Surveillance

Previous: https://varsity.thopps.com/the-cost-of-too-many-alerts

A complete system view of how intelligent surveillance actually works.

Throughout this series, we’ve examined many parts of intelligent surveillance.

Detection.

Tracking.

Time.

Confidence.

Event lifecycles.

Suppression.

Alert prioritization.

Each solves a real technical problem.

But intelligence does not emerge from individual mechanisms.

It emerges when they operate as a coordinated system.

In this final article, we connect those pieces into a complete technical view, showing what intelligence looks like when the pipeline is designed end-to-end.

No single algorithm creates intelligence.

A detector alone only produces bounding boxes.

A tracker alone only maintains IDs.

A rules engine alone only evaluates conditions.

Individually, these components are correct.

Collectively — without orchestration — they produce chaos.

Real intelligence appears only when system boundaries are defined:

This is a systems problem, not a model problem.

The first layer operates entirely at frame level.

Technically, this includes:

This layer answers only one question:

What appears in this frame?

No memory exists here.

Each frame is stateless by design.

This is intentional — perception must remain fast, parallel, and disposable.

But perception alone cannot support decision-making.

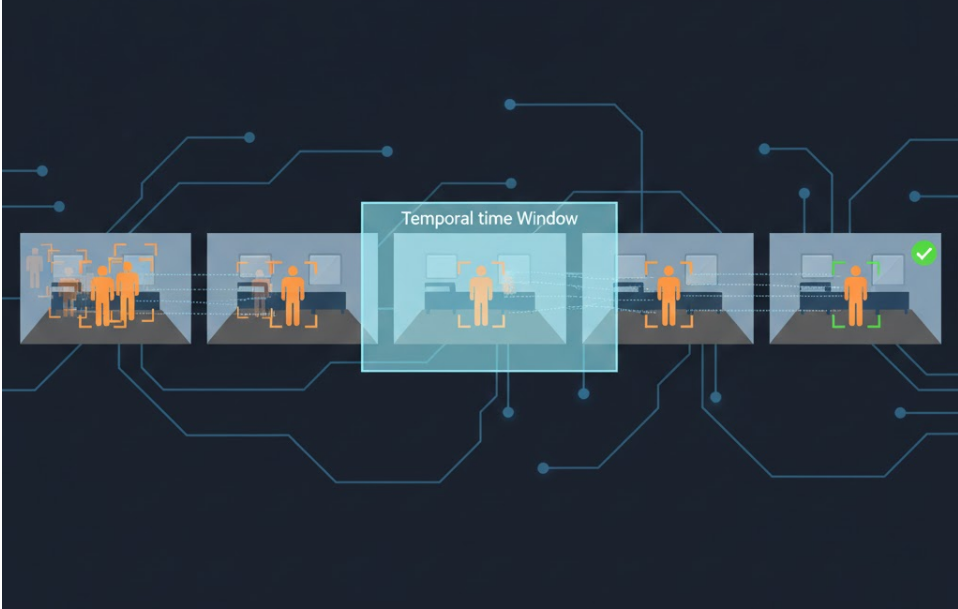

The second layer introduces time.

Here, the system begins accumulating observations using:

Transient detections are filtered automatically.

If an object appears for only 2–3 frames, it never progresses further.

Only detections that persist beyond temporal thresholds remain eligible.

This is the system’s first stability gate.

Time becomes the first filter.
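
As a rough illustration of this stability gate, here is a minimal sketch in Python. The class name `PersistenceGate`, the `min_frames` parameter, and the idea of keying detections by something coarse like a (label, zone) pair are assumptions made for this example, not details taken from any specific product.

```python
from collections import defaultdict


class PersistenceGate:
    """Passes a detection key only after it has been seen in enough consecutive frames."""

    def __init__(self, min_frames: int = 5):
        # min_frames is a hypothetical threshold; real systems tune it per camera and use case.
        self.min_frames = min_frames
        self._streak = defaultdict(int)  # key -> number of consecutive frames seen

    def update(self, keys_in_frame: set) -> set:
        # Drop the streak for any key that is missing from the current frame.
        for key in list(self._streak):
            if key not in keys_in_frame:
                del self._streak[key]
        # Extend the streak for every key present in the current frame.
        for key in keys_in_frame:
            self._streak[key] += 1
        # Only keys that persisted long enough remain eligible.
        return {k for k, n in self._streak.items() if n >= self.min_frames}
```

A detection that shows up for three frames and then disappears never leaves this gate, which is the behaviour described above.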

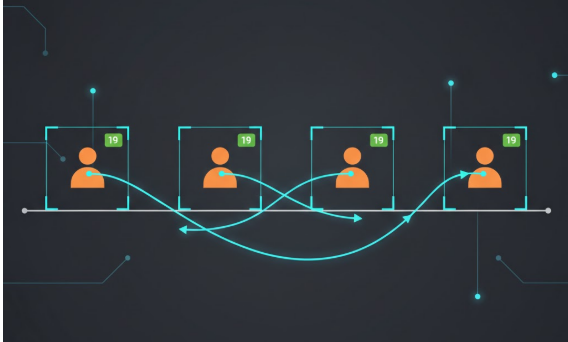

Once persistence exists, identity matters.

Tracking algorithms (DeepSORT, ByteTrack, OC-SORT) assign consistent IDs across frames.

Technically, this enables:

The system can now ask:

Behaviour cannot exist without identity continuity.
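
To make the value of identity concrete, the sketch below derives dwell time from track IDs. It assumes the IDs already come from some tracker and does not call any tracker library; `TrackHistory` and `update_tracks` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class TrackHistory:
    first_seen: float  # timestamp (seconds) of the first observation
    last_seen: float   # timestamp (seconds) of the most recent observation

    @property
    def dwell_seconds(self) -> float:
        # How long this identity has been continuously known to the system.
        return self.last_seen - self.first_seen


def update_tracks(history: dict, track_ids, timestamp: float) -> None:
    """Record that each track ID was observed at the given timestamp."""
    for tid in track_ids:
        if tid in history:
            history[tid].last_seen = timestamp
        else:
            history[tid] = TrackHistory(first_seen=timestamp, last_seen=timestamp)
```

Without a stable ID, every frame would start the clock again, and a question such as "how long has this object been here?" could not be answered.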

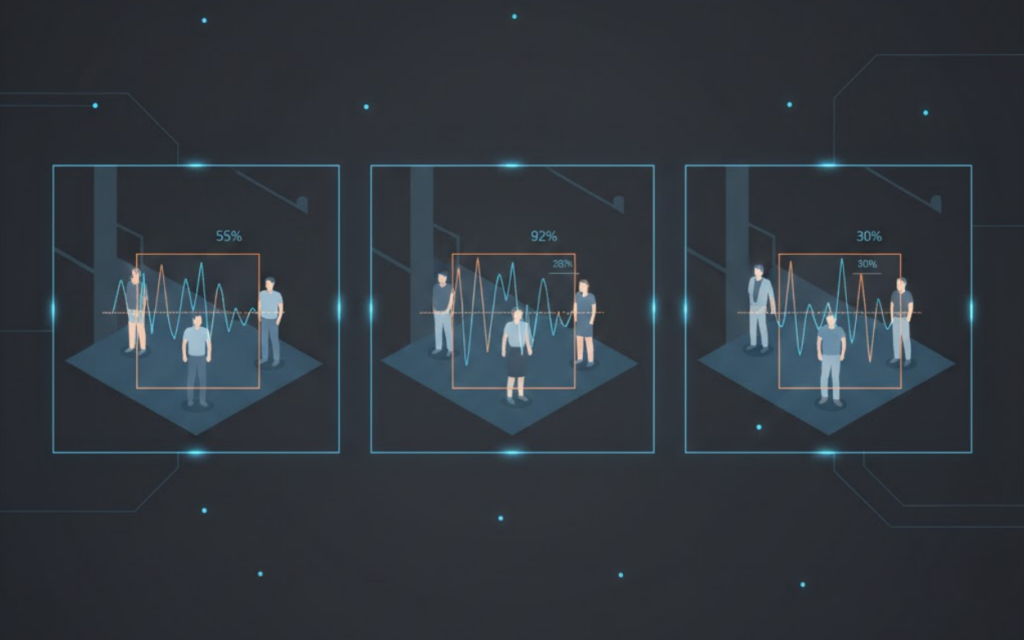

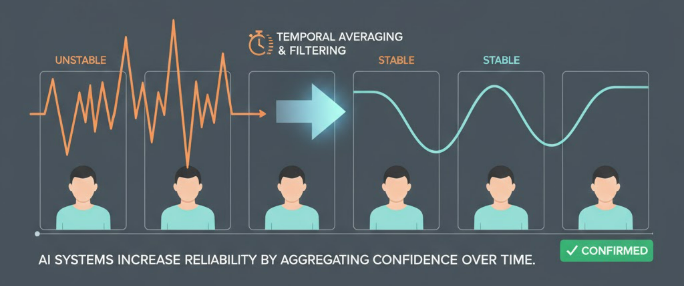

Frame-level confidence is volatile by nature.

Lighting changes.

Occlusions occur.

Angles shift.

Reacting to instantaneous confidence creates alert jitter.

Instead, mature systems compute:

Confidence is accumulated across time windows.

An alert becomes eligible only when confidence remains stable for a defined duration.

This transforms fluctuating signals into reliable judgment.
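
One simple way to accumulate confidence over a time window is a rolling mean, sketched below. The window length, the threshold, and the choice of a plain mean are assumptions for this example; a production system might prefer an exponential average or a stricter stability test.

```python
from collections import deque


class ConfidenceWindow:
    """Reports eligibility only once a full window of scores averages above a threshold."""

    def __init__(self, window: int = 10, threshold: float = 0.6):
        # Both values are hypothetical defaults chosen for illustration.
        self.threshold = threshold
        self._scores = deque(maxlen=window)  # oldest scores are discarded automatically

    def update(self, score: float) -> bool:
        self._scores.append(score)
        window_full = len(self._scores) == self._scores.maxlen
        mean = sum(self._scores) / len(self._scores)
        # A single high frame cannot trigger anything until the window has filled.
        return window_full and mean >= self.threshold
```

With this design a single spike does not make an alert eligible, and a single dip does not immediately revoke eligibility.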

At this stage, the system no longer treats detections as isolated.

Events become structured processes.

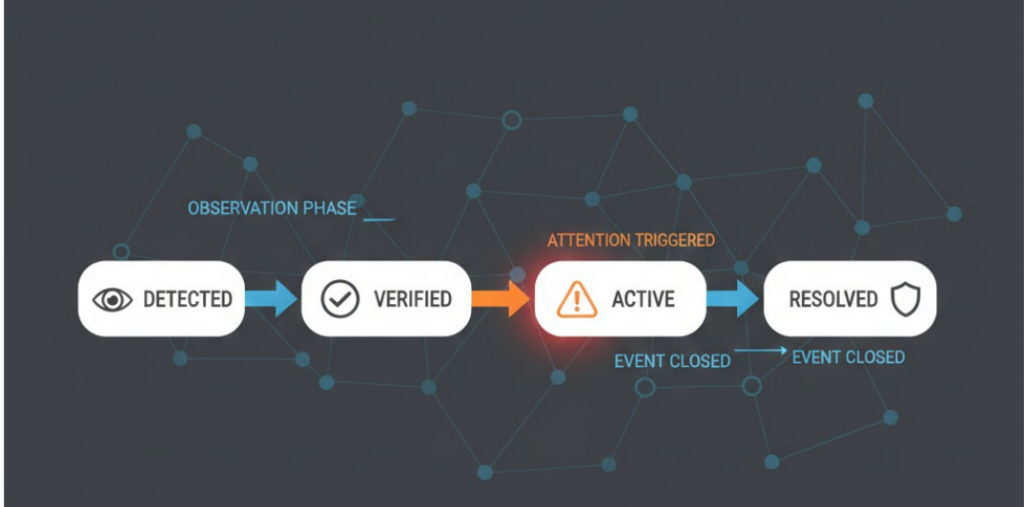

Each event progresses through states:

Technically implemented using:

Alerts are allowed only at specific transitions — not continuously.

This prevents repeated notifications for unchanged conditions.

The system now understands event evolution, not moments.
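
The idea of alerting only at transitions can be sketched as a small state machine. The three states and the set of alerting transitions below are assumptions chosen for this example; an actual system may define more states and different rules.

```python
from enum import Enum, auto


class EventState(Enum):
    # Hypothetical lifecycle states for this sketch.
    CANDIDATE = auto()
    ACTIVE = auto()
    RESOLVED = auto()


# Transitions that are allowed to emit an alert (an assumption for this sketch).
ALERTING_TRANSITIONS = {
    (EventState.CANDIDATE, EventState.ACTIVE),
    (EventState.ACTIVE, EventState.RESOLVED),
}


class Event:
    def __init__(self, event_id: str):
        self.event_id = event_id
        self.state = EventState.CANDIDATE

    def step(self, condition_holds: bool, stable: bool) -> bool:
        """Advance the state machine; return True only if this step should alert."""
        previous = self.state
        if self.state is EventState.CANDIDATE and condition_holds and stable:
            self.state = EventState.ACTIVE
        elif self.state is EventState.ACTIVE and not condition_holds:
            self.state = EventState.RESOLVED
        # Staying in the same state never matches an alerting transition.
        return (previous, self.state) in ALERTING_TRANSITIONS
```

An event that stays active for many frames returns `True` once when it becomes active and once when it resolves, and `False` on every step in between.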

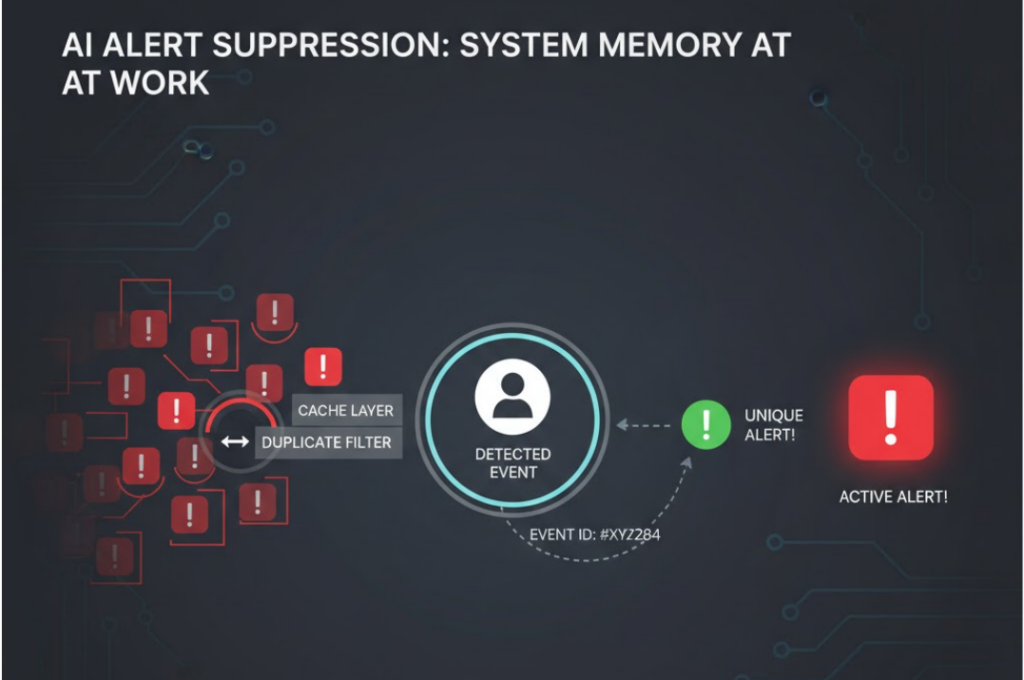

Without suppression, a single active event may generate alerts every second.

To prevent this, systems introduce memory.

Common implementations include:

If an alert has already been issued for a given event ID, subsequent triggers are suppressed until expiration.

One situation produces one alert.

Not twenty.

Silence becomes an engineered behaviour.
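
A minimal sketch of suppression keyed by event ID might look like the following. The in-memory dictionary and the `ttl_seconds` value are assumptions; a deployed system could hold the same state in a shared cache with key expiry instead.

```python
class AlertSuppressor:
    """Allows one alert per event ID until its suppression window expires."""

    def __init__(self, ttl_seconds: float = 60.0):
        # ttl_seconds is a hypothetical cooldown chosen for illustration.
        self.ttl_seconds = ttl_seconds
        self._expires_at = {}  # event_id -> time at which suppression ends

    def should_emit(self, event_id: str, now: float) -> bool:
        expiry = self._expires_at.get(event_id)
        if expiry is not None and now < expiry:
            # An alert was already issued for this event and has not expired yet.
            return False
        self._expires_at[event_id] = now + self.ttl_seconds
        return True
```

If the same event triggers once per second for twenty seconds, only the first trigger passes; the rest are dropped until the window expires.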

Only after all previous layers does alerting become possible.

An alert is emitted only if:

This final gate is intentional.

Alerting is not detection output.

It is a system-level decision.
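
To show what a system-level decision can look like in code, the sketch below combines the outcomes of the earlier layers into one final check. The specific field names are assumptions inferred from the layers discussed in this article, not a definitive list of conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResults:
    # Each field is a hypothetical summary of one earlier layer's outcome.
    persisted: bool               # survived the temporal persistence gate
    has_track_id: bool            # tied to a consistent identity
    confidence_stable: bool       # confidence held across the time window
    at_alerting_transition: bool  # the event just made an alert-worthy transition
    suppressed: bool              # an alert for this event is still in cooldown


def should_alert(g: GateResults) -> bool:
    """Emit an alert only when every layer agrees and nothing suppresses it."""
    return (
        g.persisted
        and g.has_track_id
        and g.confidence_stable
        and g.at_alerting_transition
        and not g.suppressed
    )
```

The detector's output never reaches this function directly; only the conclusions of each layer do.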

Notice where intelligence actually appears.

Not in detection accuracy.

Not in model architecture.

Not in inference speed.

It appears at the boundaries between layers:

Each boundary filters uncertainty.

Each layer reduces noise.

Each decision is delayed until evidence accumulates.

This is how systems learn restraint.

When the system is complete, intelligence feels quiet.

Most detections vanish.

Most motion fades.

Most frames are forgotten.

Only meaningful situations survive the pipeline.

And when an alert finally appears, it is:

The system does not shout.

It speaks deliberately.

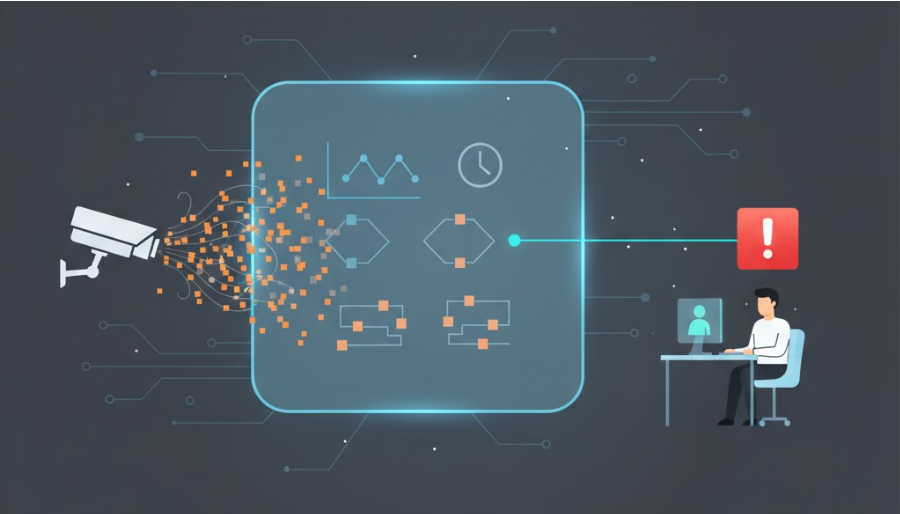

Intelligence in surveillance does not come from detecting more events.

It comes from deciding which events matter.

When perception, time, memory, and logic work together, noise is filtered naturally and alerts become deliberate rather than reactive.

The goal is not faster responses, but better ones.

A system that waits, verifies, and suppresses unnecessary repetition produces fewer alerts — and more trust.

That is what intelligence looks like when everything works together.

Throughout this series, we saw that intelligence is not created by detection alone.

It emerges when time, memory, and logic work together to turn signals into decisions.

That coordination is what defines real surveillance intelligence.

First in series: https://varsity.thopps.com/smart-camera-vs-smart-surveillance