Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

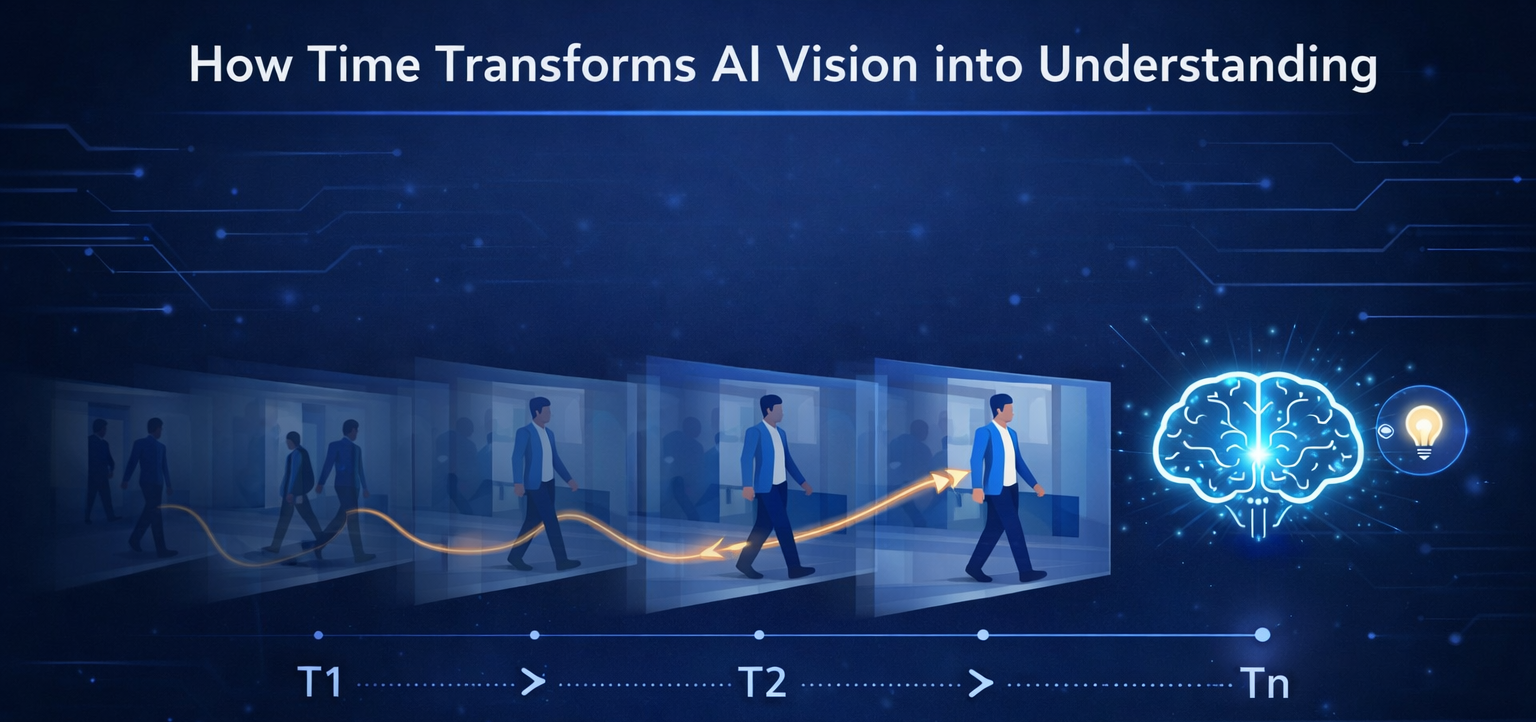

Learning Series: Foundations of Smart Surveillance

Previous: https://varsity.thopps.com/the-three-layers-of-vision-intelligence

How duration, repetition, and patterns transform vision into understanding

In most AI discussions, we talk about accuracy.

Better models.

Better detection.

Higher confidence scores.

But in real surveillance systems, accuracy alone rarely decides whether an alert is useful.

Time does.

Because surveillance is not about what appears —

it’s about what unfolds.

A camera frame can show a person standing near a door.

That frame might look perfectly normal.

But what if the person stays for 30 seconds?

What if they stay for 10 minutes?

What if they return again and again?

Nothing in the pixels changed.

Only time did.

And yet, the meaning completely transformed.

This is why surveillance problems are not image problems —

they are temporal problems.

Detection models like YOLOv8 and YOLO-World, or models deployed with OpenVINO, operate on individual frames.

Each frame is processed independently.

frame → detection → output

No memory.

No history.

No understanding.

To introduce time, systems must add an entirely new layer:

temporal memory.

This is usually built outside the model.

Real pipelines look more like this:

RTSP stream

↓

FFmpeg frame capture

↓

YOLO detection

↓

Tracker (DeepSORT / ByteTrack)

↓

Temporal logic engine

↓

Event generation

Time is not learned by the model; it is engineered into the system.
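The stage order above can be sketched as a simple loop to show where state lives. This is a minimal, hypothetical skeleton: `detect`, `track`, and `TemporalEngine` are illustrative stand-ins, not the APIs of YOLO, DeepSORT, or ByteTrack, and the frames are faked rather than decoded from an RTSP stream with FFmpeg. The detector is a pure function of one frame; only the temporal engine remembers anything across frames.

```python
from dataclasses import dataclass, field


@dataclass
class Detection:
    label: str
    box: tuple[float, float, float, float]  # (x1, y1, x2, y2), hypothetical format


def detect(frame: dict) -> list[Detection]:
    """Stand-in for a per-frame detector: no memory, same input -> same output."""
    return [Detection("person", box) for box in frame["boxes"]]


def track(detections: list[Detection]) -> dict[int, Detection]:
    """Stand-in for a tracker; here the list index simply doubles as a track ID."""
    return dict(enumerate(detections))


@dataclass
class TemporalEngine:
    """The only stateful stage: remembers when each track ID was first seen.
    This sketch never forgets a track; a real engine would expire stale IDs."""
    first_seen: dict[int, float] = field(default_factory=dict)

    def update(self, tracks: dict[int, Detection], t: float) -> list[str]:
        events = []
        for track_id in tracks:
            start = self.first_seen.setdefault(track_id, t)
            if t - start >= 10.0:  # hypothetical 10-second presence threshold
                events.append(f"track {track_id} present for {t - start:.0f}s")
        return events


def run(frames: list[dict]) -> list[str]:
    engine = TemporalEngine()
    events = []  # stands in for the event-generation stage
    for frame in frames:  # in practice: frames decoded from the camera stream
        tracks = track(detect(frame))
        events.extend(engine.update(tracks, frame["t"]))
    return events


frames = [
    {"t": 0.0, "boxes": [(10, 10, 50, 120)]},
    {"t": 5.0, "boxes": [(12, 10, 52, 120)]},
    {"t": 12.0, "boxes": [(11, 11, 51, 121)]},
]
print(run(frames))
```

No single frame carries the event; it appears only because the engine compares the current timestamp with one it stored earlier.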

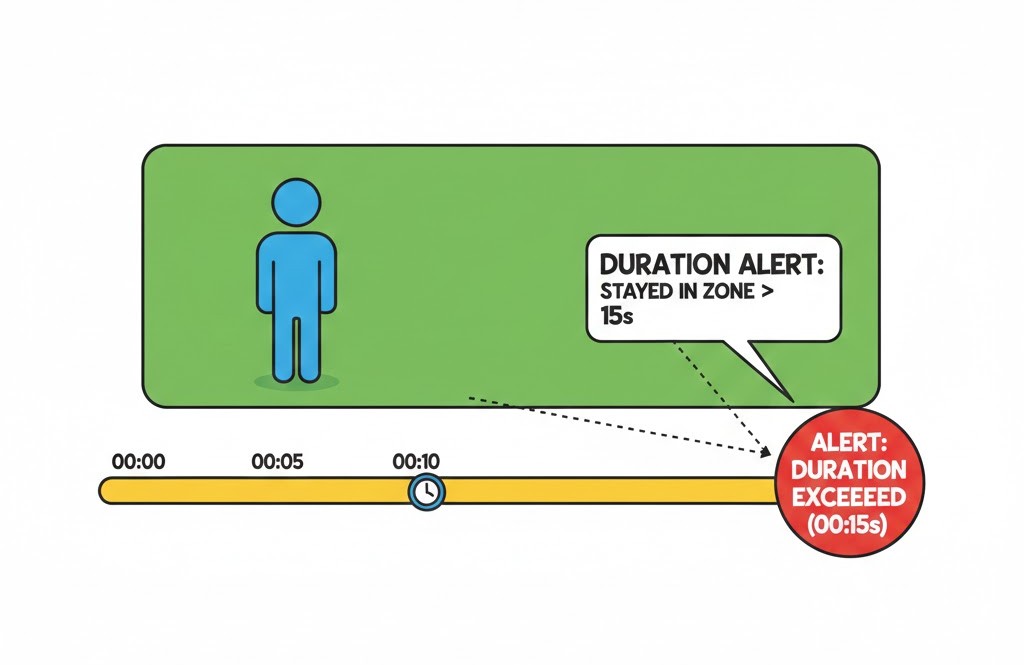

Duration answers a simple but powerful question:

How long did this continue?

In real systems, each tracked object maintains its own timing record. For example:

Person #12 entered at 10:02:15

Current time: 10:07:30

Duration: 5 minutes 15 seconds
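The duration in this example is a plain timestamp subtraction. A minimal sketch using Python's standard `datetime` module; the `HH:MM:SS` string format and the `dwell_duration` helper are assumptions for illustration.

```python
from datetime import datetime


def dwell_duration(entered: str, now: str) -> str:
    """Time between two same-day HH:MM:SS timestamps, as 'X minutes Y seconds'.
    Assumes no midnight crossing (the result would be negative otherwise)."""
    fmt = "%H:%M:%S"
    delta = datetime.strptime(now, fmt) - datetime.strptime(entered, fmt)
    minutes, seconds = divmod(int(delta.total_seconds()), 60)
    return f"{minutes} minutes {seconds} seconds"


print(dwell_duration("10:02:15", "10:07:30"))  # 5 minutes 15 seconds
```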

This enables detection of:

Tech-wise, this is often implemented using:

Nothing visual changes, but behaviour emerges.
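One common way to turn per-track timestamps into a duration check is a pair of dictionaries keyed by track ID. A minimal sketch, assuming the tracker supplies a stable integer ID and a timestamp in seconds for each sighting; `DwellMonitor`, `max_dwell`, and `max_gap` are hypothetical names, and the threshold values in the usage are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class DwellMonitor:
    """Tracks how long each object has been continuously present."""
    max_dwell: float  # seconds an object may stay before it is flagged
    max_gap: float    # seconds a track may go unseen before its timer resets
    first_seen: dict[int, float] = field(default_factory=dict)
    last_seen: dict[int, float] = field(default_factory=dict)

    def observe(self, track_id: int, t: float) -> bool:
        """Record a sighting at time t; return True if the dwell limit is exceeded."""
        last = self.last_seen.get(track_id)
        if last is None or t - last > self.max_gap:
            # New track, or one that vanished for too long: start a fresh visit.
            self.first_seen[track_id] = t
        self.last_seen[track_id] = t
        return t - self.first_seen[track_id] > self.max_dwell


monitor = DwellMonitor(max_dwell=120.0, max_gap=5.0)
flags = [monitor.observe(12, float(t)) for t in range(0, 131)]  # one sighting per second
print(flags.index(True))  # 121: first second at which the stay exceeds 120 s
```

The `max_gap` tolerance matters in practice: without it, a single missed detection would restart the clock and the long stay would never be noticed.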

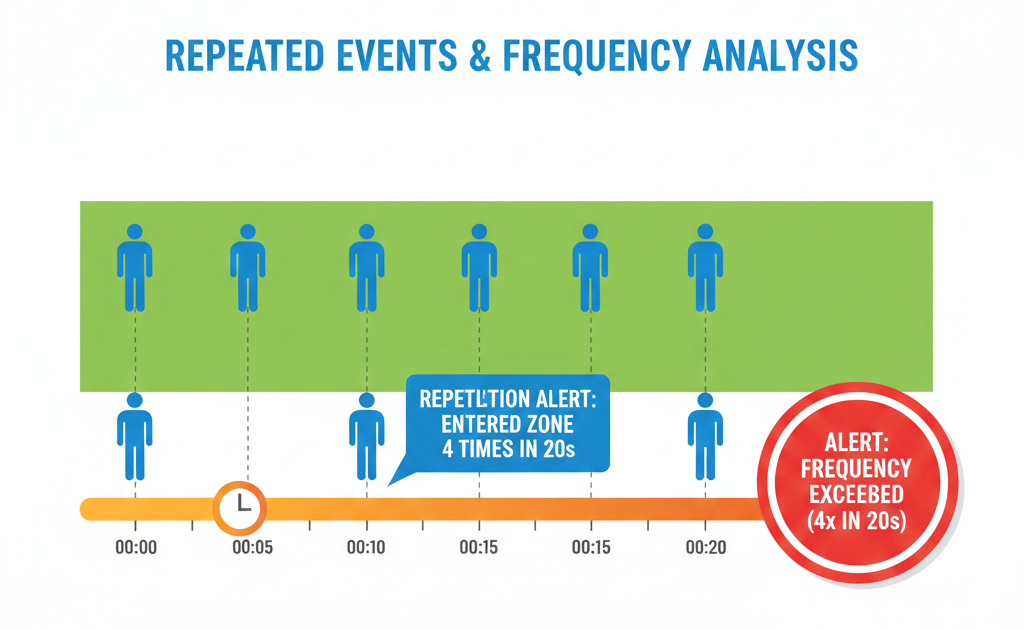

Some behaviours are not suspicious because they last long.

They become meaningful because they repeat.

Examples:

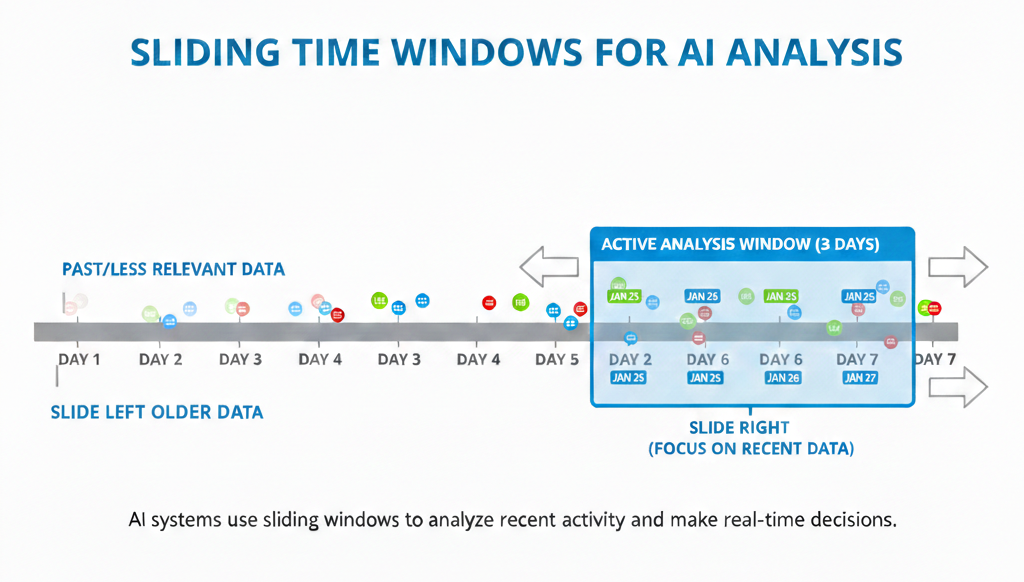

To detect this, systems use temporal windows.

Instead of storing everything forever, they ask:

“What happened in the last 30 seconds?”

“What happened in the last 5 minutes?”

This is called a sliding time window.

Technically implemented using:

Frequency transforms isolated actions into behaviour patterns.

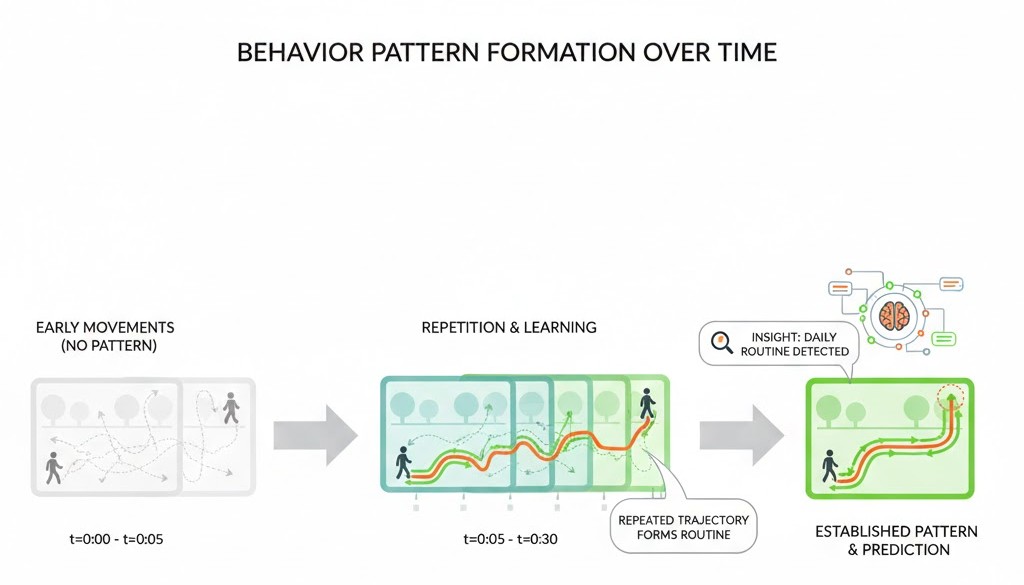

Patterns appear when duration and frequency combine.

This is where intelligence truly begins.

Patterns look like:

At this stage, the system is no longer reacting.

It is comparing the present with the past.

This logic is often built using:

Not everything requires machine learning.

Many powerful systems rely on structured temporal logic.
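As an illustration of pattern logic without machine learning, the sketch below combines duration and frequency into one rule: flag a track that has made at least `min_visits` separate visits to a zone within the last `window` seconds and whose total time across those visits reaches `min_total_dwell`. The rule, the `Visit` record, and every threshold are hypothetical choices, not a standard definition.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    track_id: int
    start: float  # seconds
    end: float    # seconds


def is_recurring_loiterer(visits: list[Visit], track_id: int, now: float,
                          window: float, min_visits: int,
                          min_total_dwell: float) -> bool:
    """Rule-based pattern: repeated visits (frequency) adding up to long presence (duration).
    A visit counts if it ended within the window."""
    recent = [v for v in visits
              if v.track_id == track_id and now - v.end <= window]
    total_dwell = sum(v.end - v.start for v in recent)
    return len(recent) >= min_visits and total_dwell >= min_total_dwell


visits = [
    Visit(7, 0.0, 40.0), Visit(7, 200.0, 260.0), Visit(7, 500.0, 560.0),  # three short visits
    Visit(8, 100.0, 900.0),                                              # one long visit
]
rule = dict(now=600.0, window=600.0, min_visits=3, min_total_dwell=120.0)
print(is_recurring_loiterer(visits, 7, **rule))  # True
print(is_recurring_loiterer(visits, 8, **rule))  # False
```

No single visit by track 7 lasts more than a minute, so a pure duration rule would miss it; the pattern only appears when the visits are compared with one another.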

One hidden concept in surveillance is state.

An object is not just detected —

it is in a state.

For example:

State transitions happen only when time conditions are met.

This allows the system to reason:

“The person entered, stayed longer than allowed, and did not exit.”

That’s not vision.

That’s reasoning built on time.
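The quoted sentence maps naturally onto a small per-object state machine. A minimal sketch: the states `ENTERED`, `OVERSTAYED`, and `EXITED`, the `ZoneStateMachine` class, and the `allowed` parameter are hypothetical names chosen to mirror that sentence, not states defined by any particular product.

```python
from enum import Enum, auto


class State(Enum):
    ENTERED = auto()
    OVERSTAYED = auto()
    EXITED = auto()


class ZoneStateMachine:
    """Per-object state that only advances when a time condition is met."""

    def __init__(self, entered_at: float, allowed: float) -> None:
        self.state = State.ENTERED
        self.entered_at = entered_at
        self.allowed = allowed  # seconds the object may stay before OVERSTAYED

    def update(self, now: float, still_inside: bool) -> State:
        if self.state is State.EXITED:
            return self.state  # terminal state: nothing further to reason about
        if not still_inside:
            self.state = State.EXITED
        elif self.state is State.ENTERED and now - self.entered_at > self.allowed:
            self.state = State.OVERSTAYED  # the time condition has been met
        return self.state


person = ZoneStateMachine(entered_at=0.0, allowed=300.0)
print(person.update(120.0, still_inside=True))  # State.ENTERED
print(person.update(360.0, still_inside=True))  # State.OVERSTAYED
print(person.update(400.0, still_inside=True))  # State.OVERSTAYED (did not exit)
```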

Instant reactions create false alerts.

Real systems intentionally wait.

They:

This is why mature surveillance platforms feel calmer.

They don’t shout every second.

They wait until time confirms intent.

Time acts as a filter against noise.
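"Waiting until time confirms intent" is often implemented as a persistence filter with a cooldown. A minimal sketch: the condition must hold continuously for `confirm_after` seconds before an alert fires, and after an alert the filter stays quiet for `cooldown` seconds. `PersistenceFilter` and both parameters are hypothetical names, and the per-second readings below are made up to mimic a flickering detector.

```python
from typing import Optional


class PersistenceFilter:
    """Alerts only after a condition has held continuously for `confirm_after`
    seconds, then suppresses repeat alerts for `cooldown` seconds."""

    def __init__(self, confirm_after: float, cooldown: float) -> None:
        self.confirm_after = confirm_after
        self.cooldown = cooldown
        self.true_since: Optional[float] = None
        self.last_alert: Optional[float] = None

    def update(self, condition: bool, now: float) -> bool:
        if not condition:
            self.true_since = None  # any interruption resets the clock
            return False
        if self.true_since is None:
            self.true_since = now
        held = now - self.true_since >= self.confirm_after
        cooled = self.last_alert is None or now - self.last_alert >= self.cooldown
        if held and cooled:
            self.last_alert = now
            return True
        return False


readings = [True, False, True, True, True, True, True, False]  # one reading per second
f = PersistenceFilter(confirm_after=3.0, cooldown=60.0)
alert_times = [t for t, r in enumerate(readings) if f.update(r, float(t))]
print(alert_times)  # [5]
```

An instant-reaction system would have fired on six of these eight readings; the filter fires once, after the condition has persisted for three seconds.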

AI surveillance doesn’t fail because it cannot see clearly.

It fails because it doesn’t observe long enough.

Time introduces:

Duration reveals persistence.

Frequency reveals repetition.

Patterns reveal intent.

And once time becomes part of the system, vision stops reacting —

and starts understanding.

If time helps systems understand behaviour, the next challenge is even harder:

How does AI decide what is normal — and what is abnormal?

In the next article, we’ll explore how surveillance systems define normal behaviour, detect anomalies, and why “unusual” is one of the hardest problems in artificial intelligence.

Next in series: What Looks Normal — Until It Isn’t