Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Foundations of Smart Surveillance

Previous: https://varsity.thopps.com/from-moments-to-meaning-the-importance-of-time-in-ai-vision

How duration, repetition, and patterns transform vision into understanding

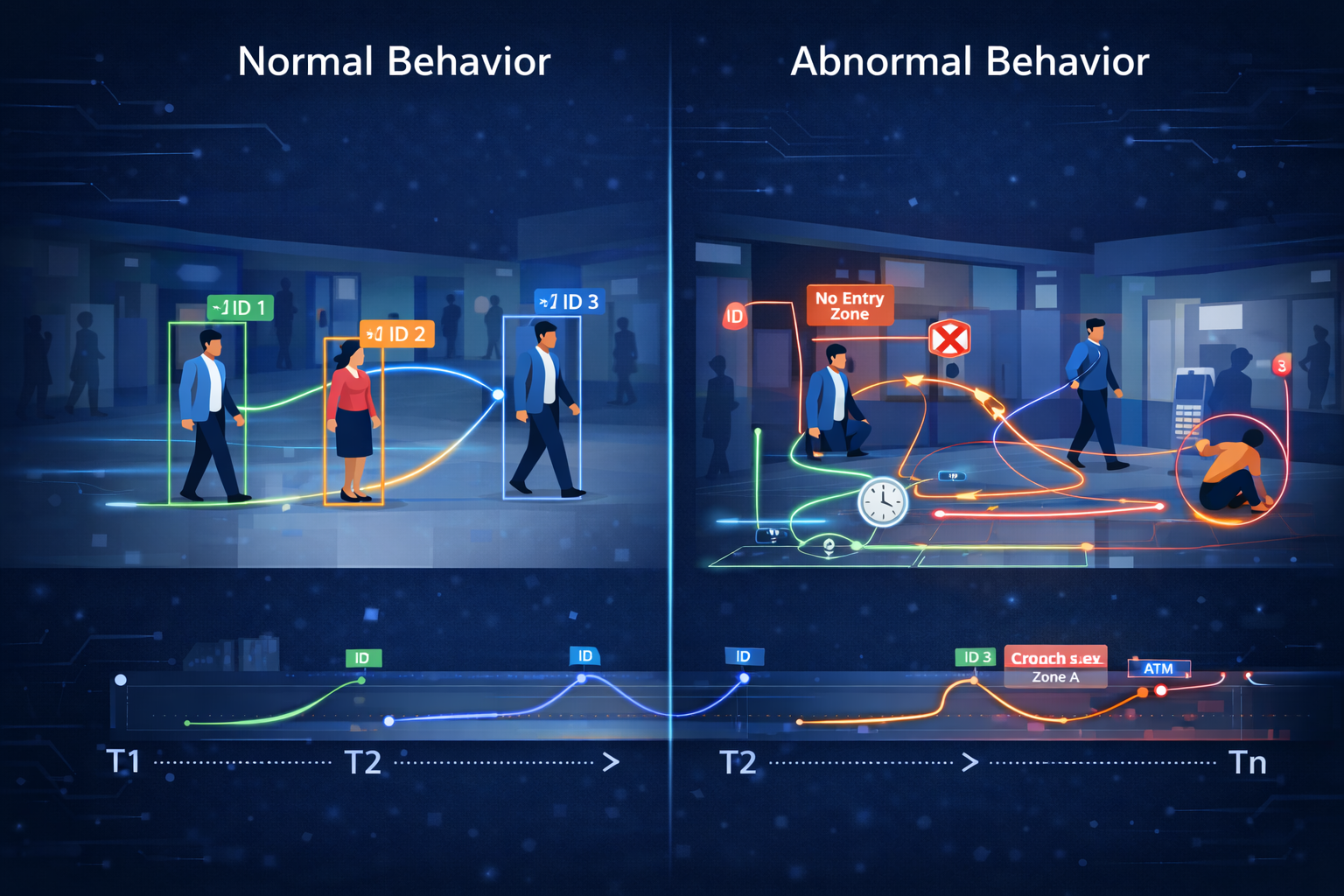

At first glance, abnormal behaviour seems easy to define.

Someone running.

Someone stopping suddenly.

Someone entering a restricted area.

But real surveillance systems don’t work this way.

Because the same action can be normal — or concerning — depending on context.

And context is not visible in a single frame.

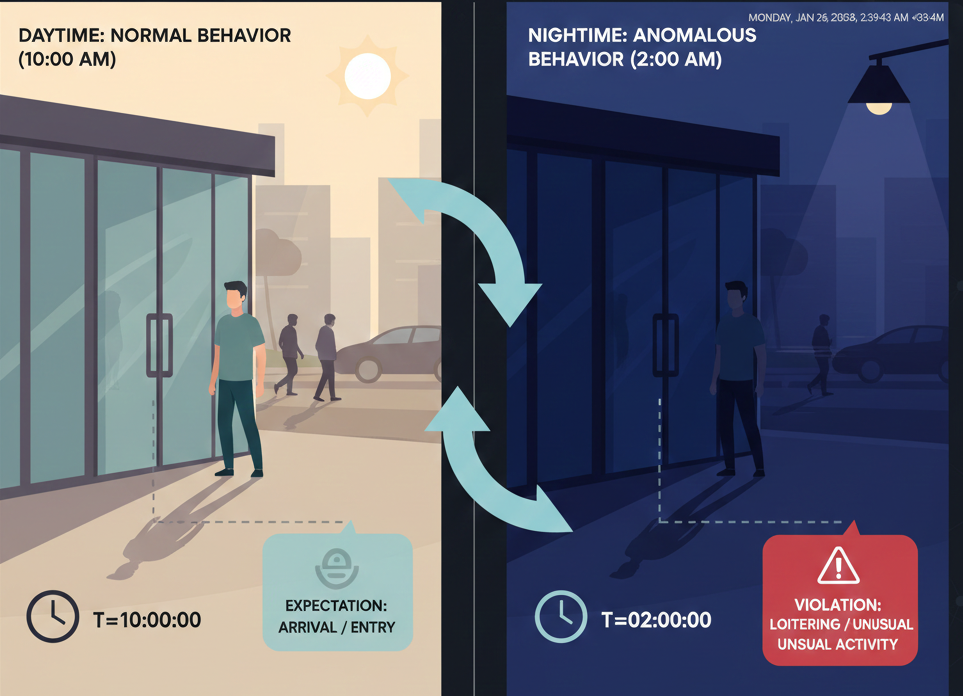

A person walking at 11 AM is normal.

The same movement at 3:30 AM may not be.

A person standing near a door for a few seconds is fine.

Standing there for several minutes changes the meaning.

Nothing changed visually.

Only the expectation did.

That’s the first lesson of intelligent surveillance.

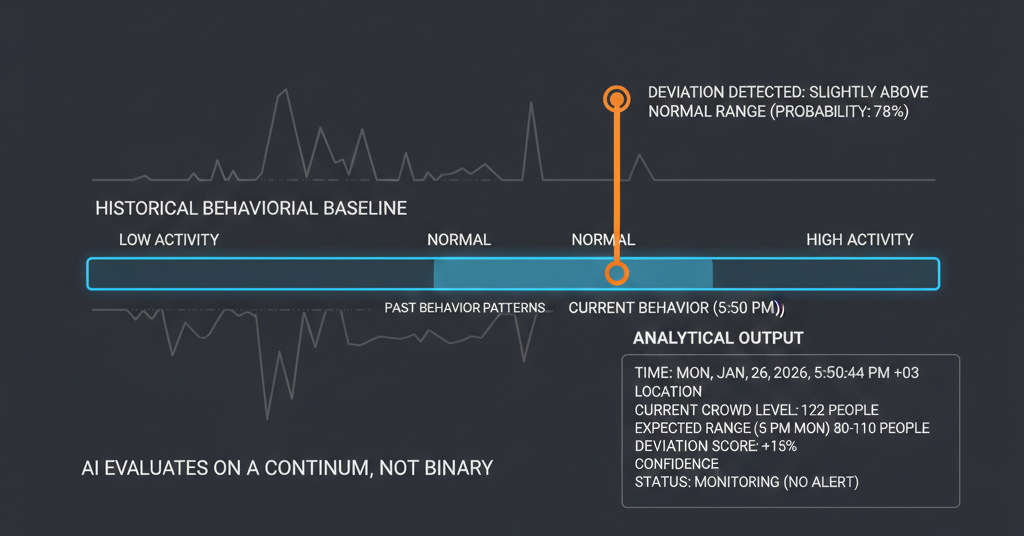

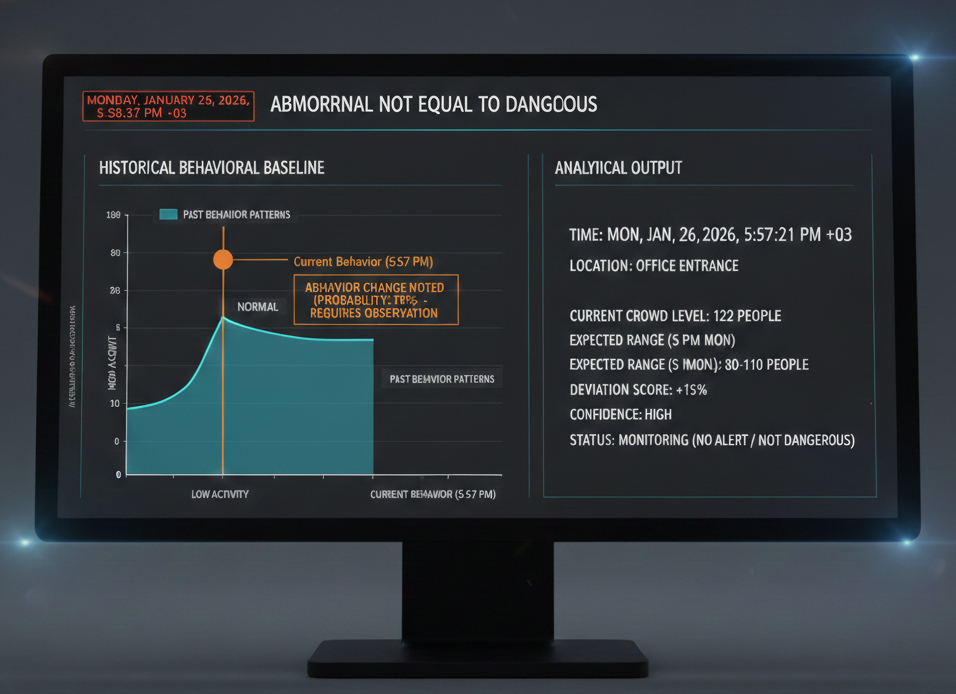

A common misconception is that abnormal behaviour simply means something uncommon.

In real systems, rarity on its own is not the signal.

Deviation is what matters.

Surveillance systems don’t ask:

“Have I seen this before?”

They ask:

“Is this different from what usually happens here?”

This shift in thinking changes everything.

Normal behaviour is rarely configured manually.

Instead, systems observe quietly over time.

They learn:

From this, a baseline forms.

Not a strict rule — but a reference.

In real deployments, these baselines are often stored using:

Normal is learned, not defined.

There is no universal normal.

Normal depends on:

A crowded lobby is expected during office hours.

The same crowd at midnight is not.

That’s why modern systems maintain time-aware baselines, not one global model.
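As a rough illustration of a time-aware baseline, the sketch below keeps a separate history of people counts for each hour of the day instead of one global average. The class name `HourlyBaseline`, the hourly bucketing, and the sample counts are assumptions made for this example, not a description of any particular product.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


class HourlyBaseline:
    """Keeps a separate history of people counts for each hour of the day."""

    def __init__(self):
        # hour of day (0-23) -> list of counts observed during that hour
        self._counts = defaultdict(list)

    def observe(self, timestamp: datetime, people_count: int) -> None:
        self._counts[timestamp.hour].append(people_count)

    def expected(self, timestamp: datetime):
        """Return the mean count seen at this hour, or None if nothing was observed."""
        history = self._counts.get(timestamp.hour)
        return mean(history) if history else None


baseline = HourlyBaseline()
baseline.observe(datetime(2024, 1, 8, 11, 0), 40)    # made-up lobby counts
baseline.observe(datetime(2024, 1, 9, 11, 30), 50)
baseline.observe(datetime(2024, 1, 9, 0, 15), 1)

lobby_at_11 = baseline.expected(datetime(2024, 1, 10, 11, 5))
lobby_at_midnight = baseline.expected(datetime(2024, 1, 10, 0, 5))
```

Here the same lobby has two different expectations: a busy one for late morning and a near-empty one for midnight. A real deployment would likely also separate weekdays from weekends, which this sketch leaves out.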

Behaviour only appears through time.

Systems measure:

Technically, this is handled using:

Without time, behaviour cannot exist — only motion.
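One way to picture how motion becomes behaviour is dwell time: how long the same tracked person stays in the same zone. The sketch below assumes an upstream tracker already supplies a track ID, a zone label, and a timestamp for each sighting; the `Sighting` type and its fields are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Sighting:
    track_id: str      # hypothetical ID assigned by an upstream tracker
    zone: str          # hypothetical zone label, e.g. "door"
    timestamp: float   # seconds since the start of the recording


def dwell_times(sightings):
    """Return {(track_id, zone): seconds between first and last sighting}."""
    first_seen = {}
    last_seen = {}
    for s in sightings:
        key = (s.track_id, s.zone)
        first_seen[key] = min(first_seen.get(key, s.timestamp), s.timestamp)
        last_seen[key] = max(last_seen.get(key, s.timestamp), s.timestamp)
    return {key: last_seen[key] - first_seen[key] for key in first_seen}


sightings = [
    Sighting("person-1", "door", 0.0),
    Sighting("person-1", "door", 4.0),
    Sighting("person-2", "door", 10.0),
    Sighting("person-2", "door", 250.0),
]
dwell = dwell_times(sightings)
```

A single sighting tells us only that someone was near the door. The difference between four seconds and four minutes appears only once sightings are linked over time. This simplified version treats separate visits to the same zone as one continuous stay.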

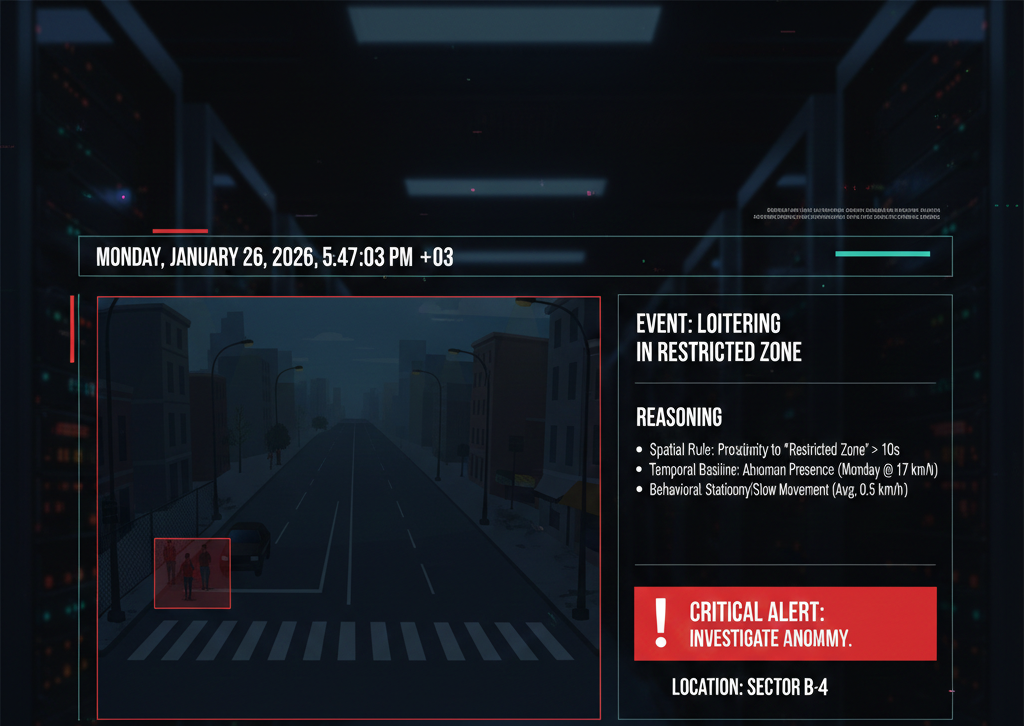

Another hidden truth:

Surveillance systems don’t decide with certainty.

They estimate deviation.

Instead of saying:

“This is wrong.”

They say:

“This is outside the usual range.”

This allows systems to stay cautious rather than accusatory.

Human review always comes later.
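A minimal sketch of "estimating deviation" is a z-score: how many standard deviations an observation sits from the historical mean. This is one common statistical choice, used here only for illustration; the function names, the sample dwell times, and the tolerance of 3 are assumptions.

```python
from statistics import mean, pstdev


def deviation_score(history, observed):
    """Return how many standard deviations `observed` lies from the historical mean."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # No spread in the history: identical values score 0, anything else is unbounded.
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma


def describe(score, tolerance=3.0):
    # The tolerance of 3 standard deviations is an illustrative assumption.
    if score > tolerance:
        return "outside the usual range"
    return "within the usual range"


dwell_history = [4, 6, 5, 7, 3]   # made-up dwell times in seconds
score_short = deviation_score(dwell_history, 6)
score_long = deviation_score(dwell_history, 240)
```

The output is a graded score and a cautious label, not a verdict. What to do with "outside the usual range" is left to whoever reviews the flag.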

Used widely in offices, factories, and access-controlled areas.

Examples:

Typically built using:

Used in larger environments like campuses or public spaces.

Live behaviour is compared against historical patterns.

Deviation beyond tolerance is flagged.
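The comparison of live behaviour against historical patterns can be sketched as a simple tolerance band around each historical value. The time-slot keys, the counts, and the 50% tolerance below are made-up values for illustration; real systems may use richer statistical or learned models.

```python
def flag_deviations(historical, live, tolerance=0.5):
    """Return the slots whose live value differs from the historical value
    by more than `tolerance` times that historical value (floored at 1)."""
    flagged = []
    for slot, expected in historical.items():
        observed = live.get(slot)
        if observed is None:
            continue  # nothing observed for this slot yet
        allowed = tolerance * max(expected, 1)  # avoid a zero-width band
        if abs(observed - expected) > allowed:
            flagged.append(slot)
    return flagged


historical_counts = {"09:00": 40, "13:00": 55, "00:00": 1}   # made-up averages
live_counts = {"09:00": 46, "13:00": 50, "00:00": 30}        # made-up live counts
flagged = flag_deviations(historical_counts, live_counts)
```

The daytime slots vary a little and stay inside the band. Only the midnight slot, where 30 people appear against an expectation of 1, is flagged.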

Often supported by:

Abnormality emerges from difference — not appearance.

An anomaly simply means:

“Something changed.”

Not every change is a threat.

Good surveillance systems direct attention; they do not make accusations.

Normal behaviour is learned over time.

Abnormal behaviour is not rare behaviour.

It is behaviour that deviates from expectation — shaped by history, time, and context.

When systems understand expectation, surveillance stops reacting blindly and starts reasoning intelligently.

Once behaviour is flagged as abnormal, the next challenge appears:

How does the system decide what action to take next?

In the next article, we’ll explore how surveillance systems move from observation to decision — using rules, states, and reasoning pipelines.

Next in series: Why Intelligence Cannot Be Hard-Coded