Contact Us

WhatsApp

+65 8012 2467

Email Us

contactus@thopps.com

Learning Series: Foundations of Smart Surveillance

Previous: https://varsity.thopps.com/what-looks-normal-until-it-isnt

How surveillance systems decide what actually matters

At some point, every intelligent system faces the same question:

“I see something unusual… now what?”

Detection can identify objects.

Tracking can follow movement.

Behaviour analysis can spot deviation.

But none of these can decide whether action is required.

That decision happens somewhere else.

And most people don’t realize where.

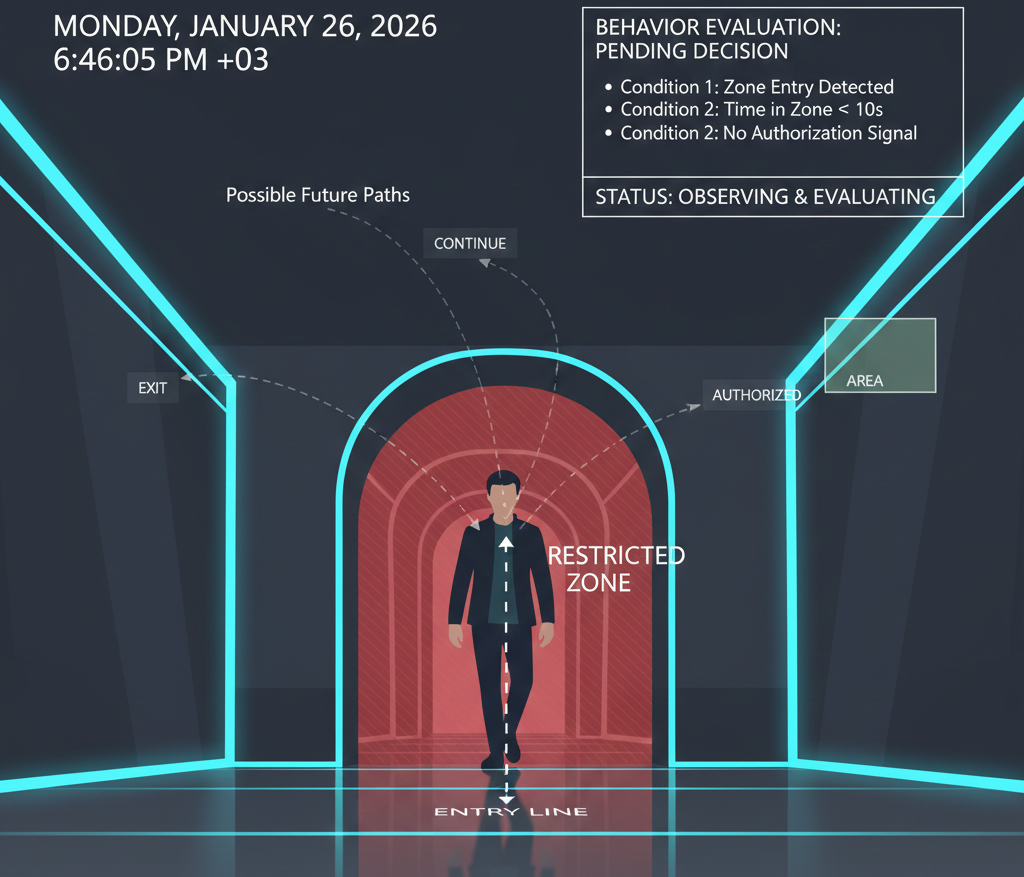

Imagine this situation:

A person enters a restricted corridor.

The system detects them instantly.

But should it alert?

What if:

Triggering an alert immediately would be wrong.

Waiting too long would also be wrong.

This tension is where intelligence truly begins.

Early surveillance systems relied entirely on rules.

Simple logic such as:

These rules still power many real deployments today.

For example:

Rules work because they are:

But rules alone are fragile.

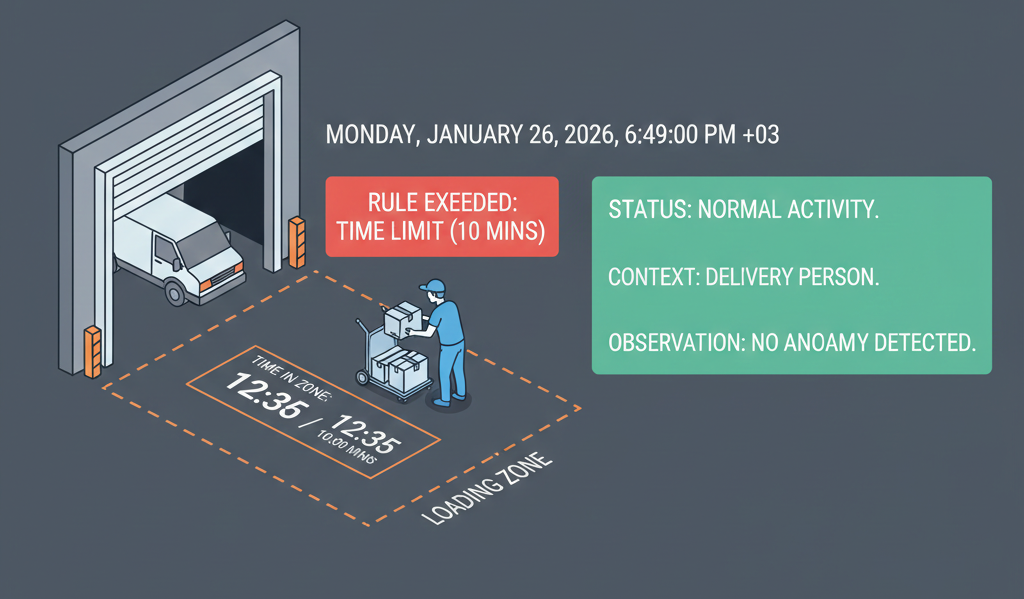

Now imagine this:

A delivery person enters a loading area.

Stays for 12 minutes.

Leaves.

The rule says “alert after 10 minutes.”

But the behaviour was normal.

The system followed the rule —

but failed the situation.
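The failure above is easy to reproduce in a few lines. This is a minimal sketch, not how any particular product implements it: the `Visit` type, the zone name, and the `dwell_rule_fires` function are hypothetical names chosen for illustration. Only the 10-minute limit and the 12-minute stay come from the scenario.

```python
from dataclasses import dataclass

# Threshold taken from the rule quoted above ("alert after 10 minutes").
DWELL_LIMIT_MINUTES = 10


@dataclass
class Visit:
    """Hypothetical record of one person's stay in a zone."""
    zone: str
    minutes_present: float


def dwell_rule_fires(visit: Visit, limit: float = DWELL_LIMIT_MINUTES) -> bool:
    """Return True when the stay exceeds the fixed dwell limit.

    The rule sees only duration. It knows nothing about who the
    person is or what they were doing.
    """
    return visit.minutes_present > limit


# The delivery scenario: a normal 12-minute stay still trips the rule.
delivery = Visit(zone="loading_area", minutes_present=12)
print(dwell_rule_fires(delivery))
```

The function is correct by its own definition, which is exactly the problem: nothing in its inputs lets it tell a delivery from loitering.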

This is where systems must evolve.

Instead of asking only what happened,

systems begin tracking what stage something is in.

This is called state.

For example:

Now the system understands sequence.

Not just duration — but progression.

This is how modern systems avoid premature alerts.
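One common way to track state is a small finite-state machine. The sketch below assumes a hypothetical set of stages and event names (`enter`, `dwell_exceeded`, `approach_door`, `leave`); real deployments would define their own. It only shows the idea that an alert depends on the sequence of events, not on any single one.

```python
from enum import Enum, auto


class Stage(Enum):
    """Hypothetical stages for one tracked person."""
    OUTSIDE = auto()
    ENTERED = auto()
    LINGERING = auto()
    ALERTED = auto()


# (current stage, event) -> next stage. Event names are illustrative.
TRANSITIONS = {
    (Stage.OUTSIDE, "enter"): Stage.ENTERED,
    (Stage.ENTERED, "dwell_exceeded"): Stage.LINGERING,
    (Stage.LINGERING, "approach_door"): Stage.ALERTED,
    (Stage.ENTERED, "leave"): Stage.OUTSIDE,
    (Stage.LINGERING, "leave"): Stage.OUTSIDE,
}


def advance(stage: Stage, event: str) -> Stage:
    """Move to the next stage, or stay put if the event does not apply."""
    return TRANSITIONS.get((stage, event), stage)


def run(events: list[str]) -> Stage:
    """Replay a sequence of events and return the final stage."""
    stage = Stage.OUTSIDE
    for event in events:
        stage = advance(stage, event)
        if stage is Stage.ALERTED:
            break  # once alerted, stop processing further events
    return stage


# A long stay followed by leaving never reaches ALERTED.
print(run(["enter", "dwell_exceeded", "leave"]))
# The same long stay followed by approaching a door does.
print(run(["enter", "dwell_exceeded", "approach_door"]))
```

In this sketch the long stay only moves the person to `LINGERING`. It takes a further event in the right order to raise an alert, which is what "progression" means here.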

Intelligence doesn’t come from one signal.

It comes from combining many weak signals.

Examples:

Individually, each signal is harmless.

Together, they tell a story.

This is how systems detect:

None of these rely on a single detection.

They rely on relationships over time.
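Combining weak signals can be sketched as a score over a sliding time window. Everything specific here is an assumption made for illustration: the signal names, the point values, the threshold of 8, and the 300-second window are not taken from any real system. Integer points are used instead of probabilities to keep the arithmetic exact.

```python
from collections import deque

# Hypothetical signals and point values; no single one reaches the threshold.
WEIGHTS = {
    "loitering": 3,
    "after_hours": 3,
    "repeated_visit": 2,
    "face_covered": 4,
}
ALERT_THRESHOLD = 8   # assumed value
WINDOW_SECONDS = 300  # assumed value


class SignalWindow:
    """Keeps recent signals and scores the distinct ones still in the window."""

    def __init__(self, window: int = WINDOW_SECONDS) -> None:
        self.window = window
        self.events: deque[tuple[float, str]] = deque()

    def add(self, timestamp: float, name: str) -> None:
        """Record a signal and drop any that fell out of the window."""
        self.events.append((timestamp, name))
        while self.events and timestamp - self.events[0][0] > self.window:
            self.events.popleft()

    def active(self) -> list[str]:
        """Distinct signals currently in the window, sorted for readability."""
        return sorted({name for _, name in self.events})

    def score(self) -> int:
        """Sum the points of each distinct active signal once."""
        return sum(WEIGHTS.get(name, 0) for name in self.active())

    def should_alert(self) -> bool:
        return self.score() >= ALERT_THRESHOLD


w = SignalWindow()
w.add(0, "loitering")
w.add(60, "after_hours")
w.add(120, "repeated_visit")
print(w.score(), w.should_alert(), w.active())
```

Because the window keeps the names of the contributing signals, `active()` can also be shown to an operator as the reason an alert fired.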

In real-world deployments, reasoning layers are often built using:

Detection feeds data.

Reasoning interprets it.

This separation is intentional — and critical.

You could try to train a model to decide everything.

But then:

That’s dangerous.

Which is why enterprise systems keep reasoning explicit.

When an alert fires, operators must know why.

Transparency is part of intelligence.

Good systems do something surprising.

They hesitate.

They wait for confirmation.

They observe persistence.

They validate sequence.

This hesitation prevents noise.
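The simplest form of this hesitation is a persistence check: a condition must hold for several consecutive observations before it counts. The sketch below is one possible way to express that. The class name `PersistenceGate` and the frame counts are hypothetical.

```python
class PersistenceGate:
    """Confirms a condition only after it persists for N consecutive frames."""

    def __init__(self, required_frames: int = 5) -> None:
        self.required = required_frames
        self.streak = 0  # consecutive frames in which the condition was seen

    def update(self, condition_seen: bool) -> bool:
        """Feed one frame's result; return True once the streak is long enough."""
        # Any frame without the condition resets the streak to zero.
        self.streak = self.streak + 1 if condition_seen else 0
        return self.streak >= self.required


gate = PersistenceGate(required_frames=3)
frames = [True, True, False, True, True, True]
print([gate.update(seen) for seen in frames])
```

A single flicker in the third frame resets the count, so the gate confirms only on the last frame. A larger `required_frames` trades slower alerts for less noise.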

It’s why mature systems feel calm —

and immature ones feel chaotic.

Rules define boundaries.

Reasoning understands situations.

True intelligence does not react instantly — it evaluates context before acting.

This is the difference between automation

and decision-making.

Even with good reasoning, many systems still fail.

Not because they detect incorrectly — but because they alert too much.

In the next article, we’ll explore why alerts fail in the real world, how false positives erode trust, and how mature systems reduce noise before acting.

Next in series: The Cost of Too Many Alerts